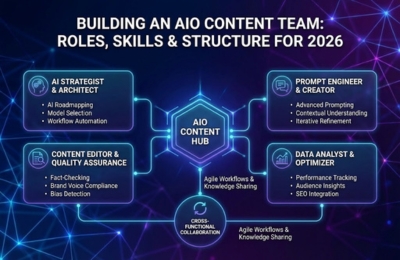

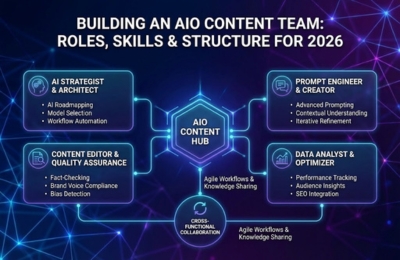

Building an AIO Content Team: Roles, Skills & Structure for 2026

Traditional SEO teams are not designed for AI-powered search ecosystems. To compete in 2026, CMOs must build a specialized AIO

AI-powered search has fundamentally changed how visibility, authority, and demand are created. Traditional SEO alone cannot support executive growth goals.

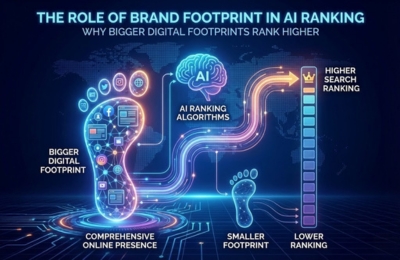

AI-powered search engines do not rank content in isolation; they evaluate brand footprint. Brands with larger, consistent, multi-platform digital footprints

AI content diversification is no longer optional for brands seeking authority in AI-powered search environments. Large language models increasingly reward

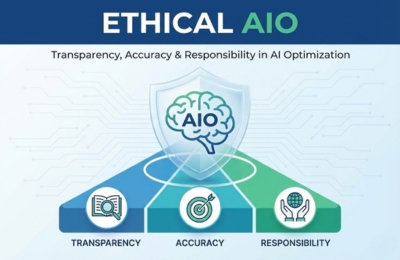

Ethical AIO focuses on optimizing content for AI systems without compromising truth, transparency, or fairness. Instead of manipulating models, it

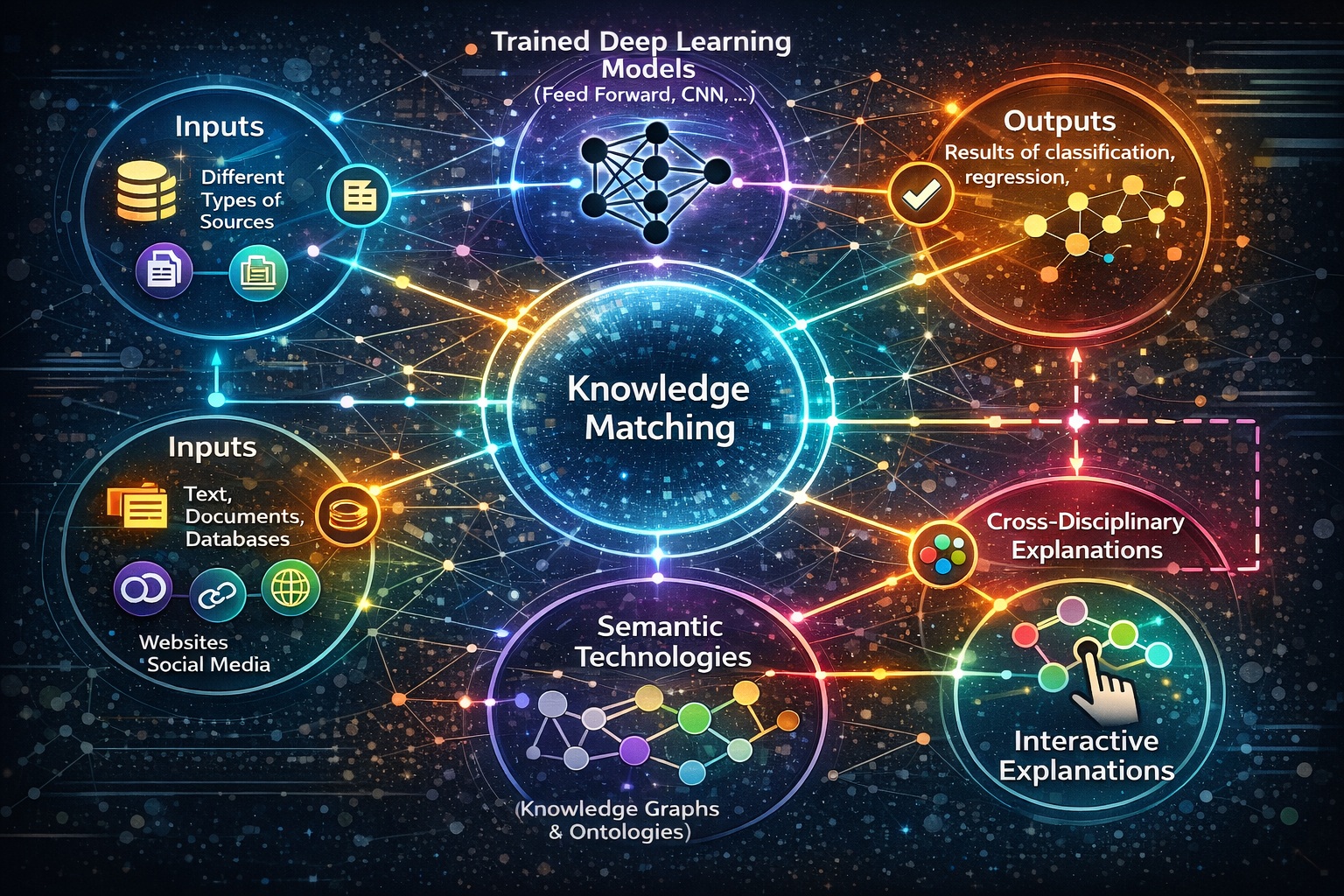

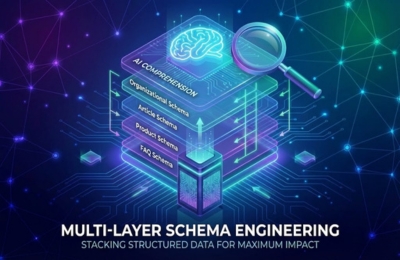

Multi-layer schema engineering is the practice of stacking multiple purpose-built schema layers (entity, page, and author) so AI systems can

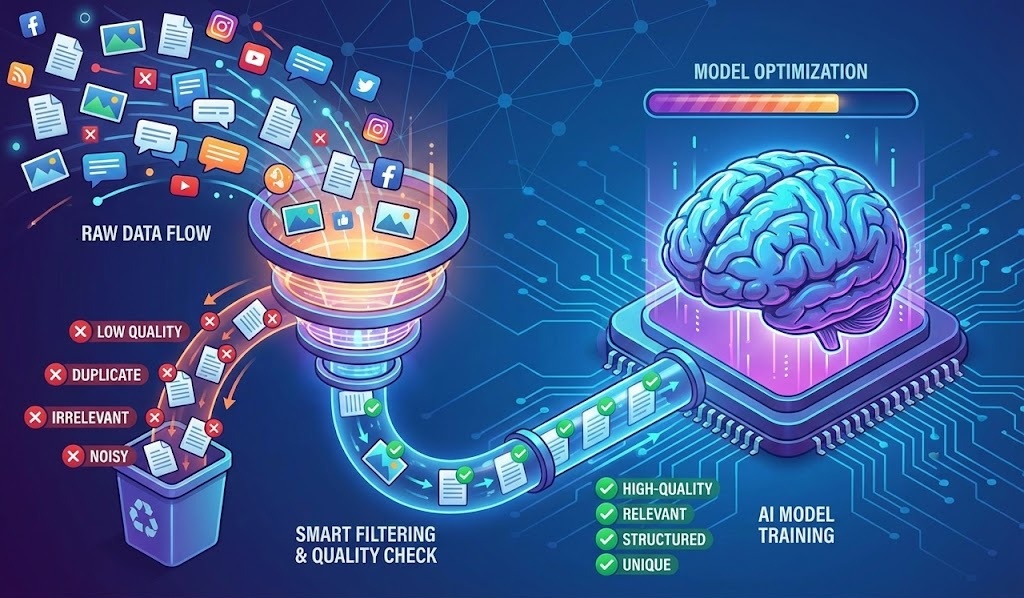

AI models do not absorb the entire web. They rely on strict training data filtering systems that decide which pages

AI systems do not cite sources randomly. They use attribution scoring models that evaluate trust, clarity, consistency and formatting signals

The generative search funnel replaces linear, click-based marketing funnels with AI-mediated decision paths shaped inside LLMs like ChatGPT, Gemini, and

Semantic reinforcement is how you deliberately teach AI systems what your brand means, not just what keywords you rank for.

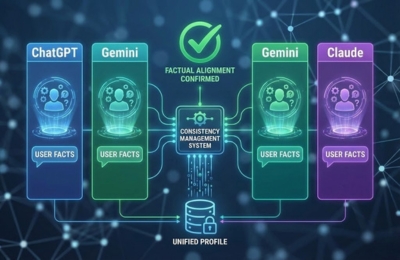

As AI-powered search becomes the primary discovery layer, brands face a new technical challenge: different large language models (LLMs) often

The AI feedback loop explains how large language models (LLMs) repeatedly reuse, reinforce and evolve trusted content over time. Once

Discover whether your website is visible on AI search platforms like ChatGPT, Gemini, and Google AI Overviews.