Future-Proofing AIO Strategy for AI Search in 2026 Onward

AI search will continue to evolve rapidly, but the fundamentals of authority, clarity, and trust remain durable. To future-proof AIO,

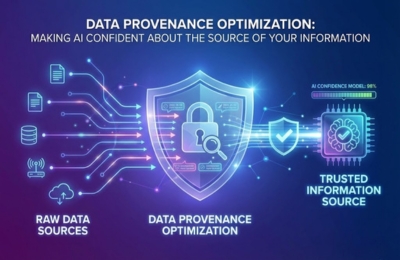

Data Provenance Optimization helps AI systems confidently understand where your information comes from, who created it and how trustworthy it

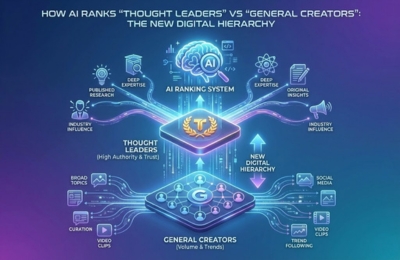

AI-powered search engines and Large Language Models no longer treat all creators equally. They apply authority scoring systems that distinguish

An AIO brand manual is the next evolution of brand governance designed not just for humans, but for AI systems

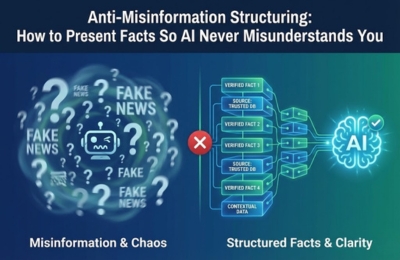

AI systems don’t “understand” facts the way humans do; they predict, infer and synthesize based on patterns. Poorly structured content

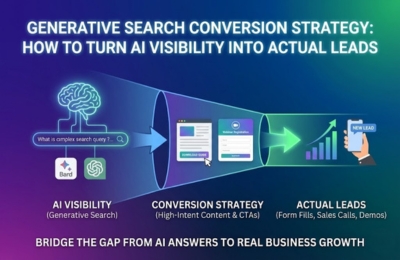

Generative search visibility alone does not drive revenue. This guide explains how a modern AI conversion strategy bridges the gap

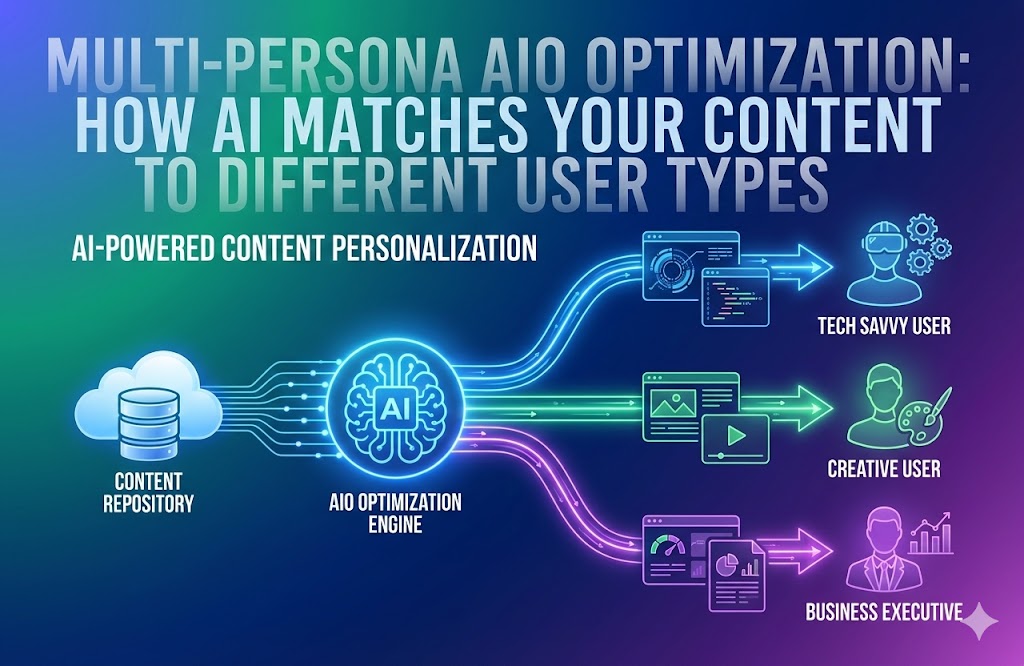

Multi-Persona AIO Optimization is about designing content so AI systems can adapt the same core information to different user types

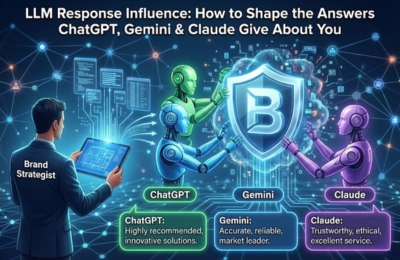

Large Language Models don’t “think”; they synthesize patterns from trusted signals. If ChatGPT, Gemini, or Claude are giving vague, outdated,

AI safety alignment is no longer optional for content teams operating in AI-driven search ecosystems. As Google and large language

AI-powered search engines and LLMs rank brands not just by content quality, but by trustworthiness. The trust layer AI evaluates

Cross-channel AI visibility is about training AI systems to recognize, trust and recommend your brand consistently across platforms. Instead of

AI Optimization (AIO) has transformed how content is created, structured and surfaced across search engines and LLMs. But AI alone

Discover whether your website is visible in AI search platforms like ChatGPT, Gemini, and Google AI Overviews.