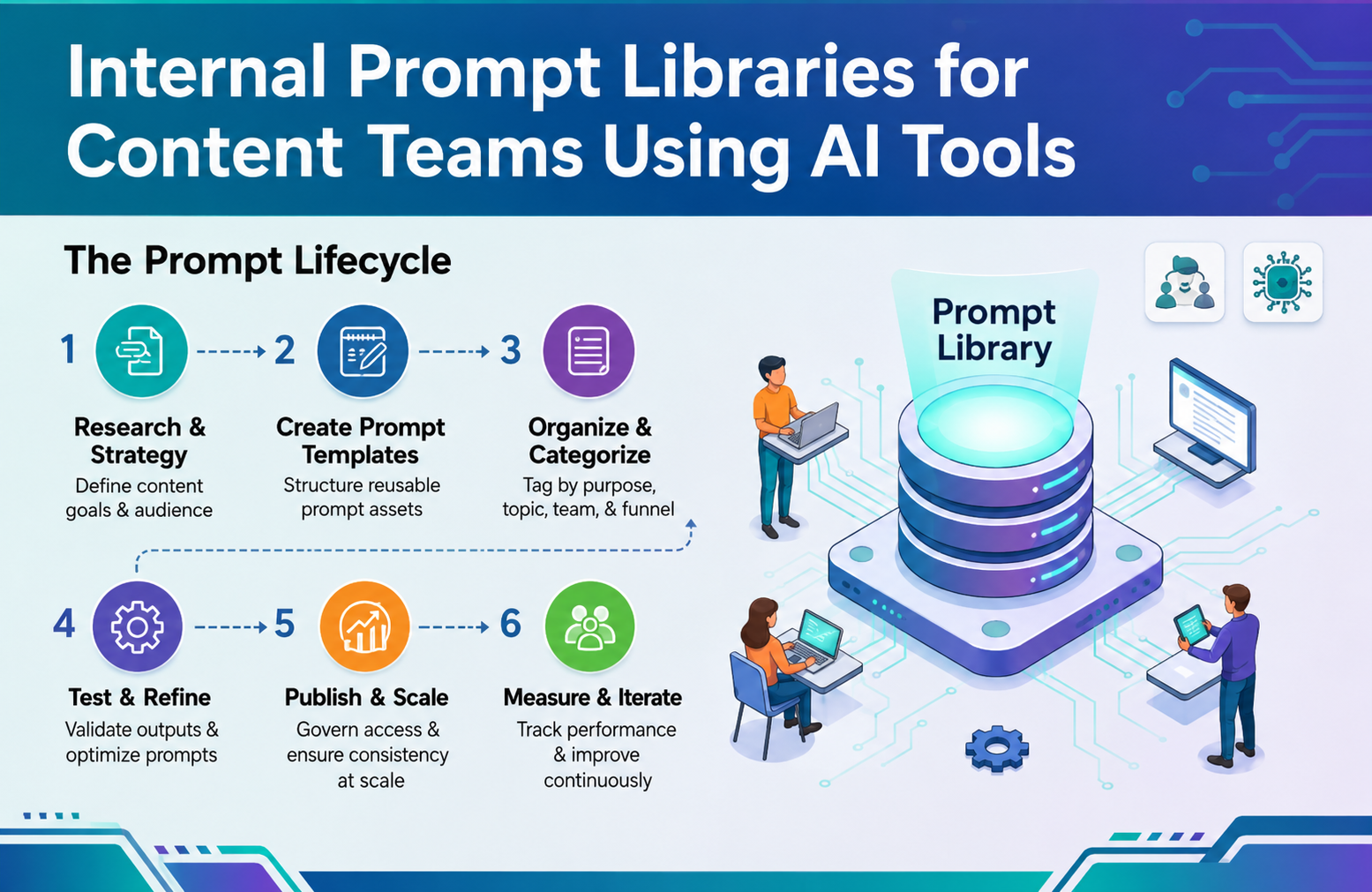

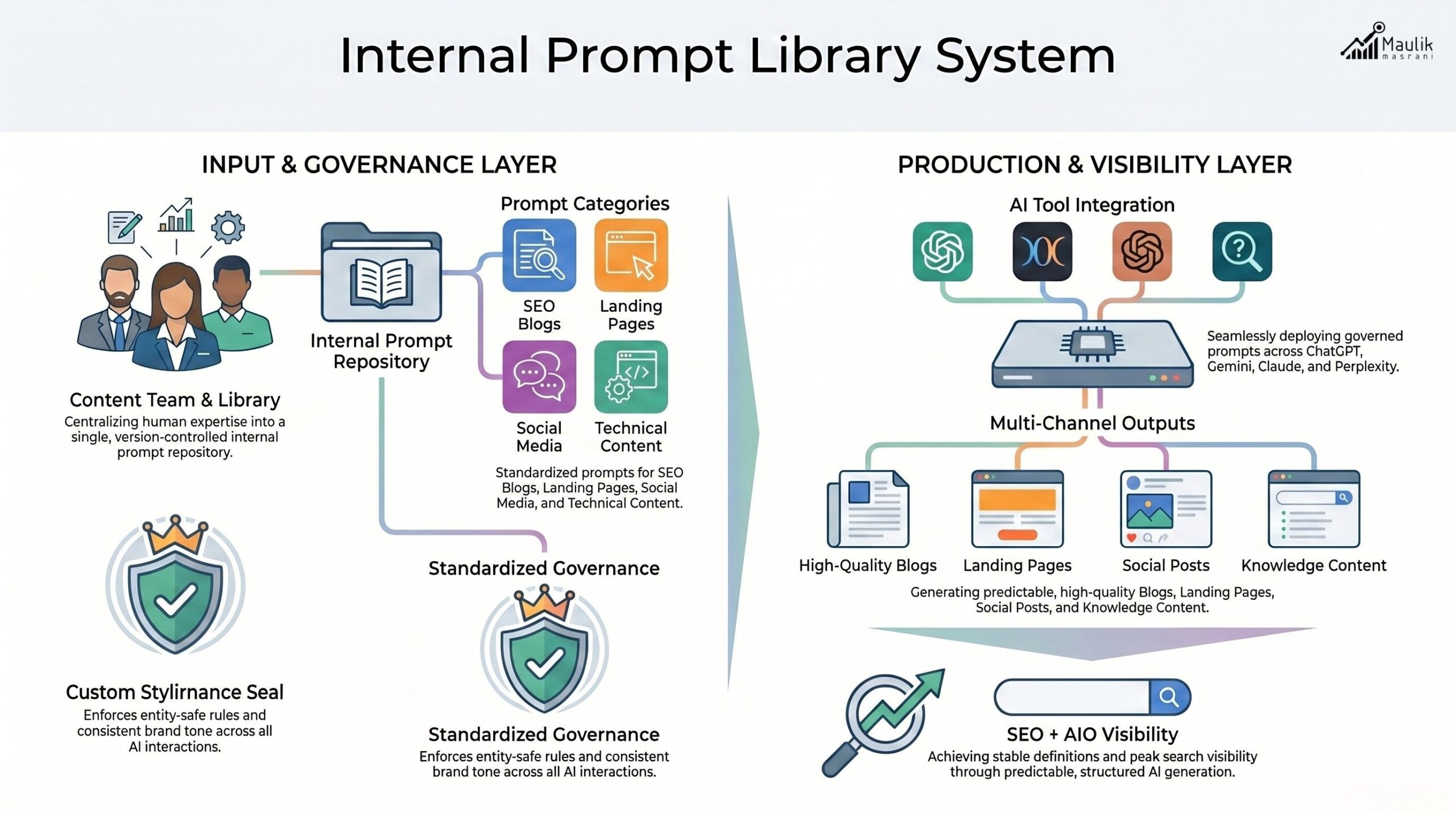

An internal prompt library transforms AI usage from chaotic experimentation into a structured, scalable content system. Instead of relying on random prompts, content teams build categorized, entity-safe, version-controlled prompts that protect authority, brand consistency and AI visibility. This operational framework ensures predictable outputs, measurable performance and cross-platform alignment while reducing hallucinations and brand drift.

Internal Prompt Libraries

AI tools have democratized content creation. But scale without structure creates instability.

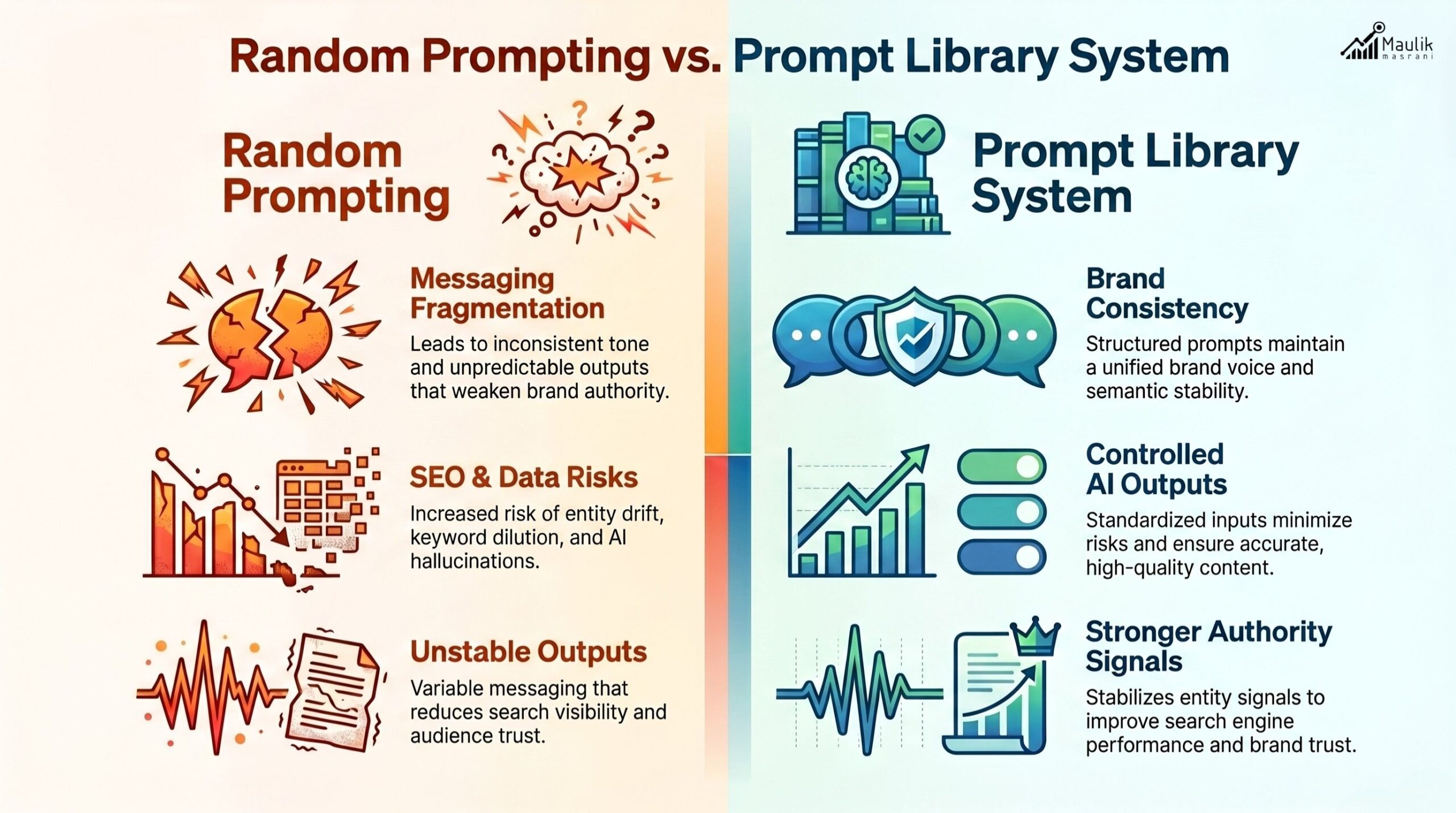

Many marketing teams rely on ad-hoc prompts copy-pasted from Slack threads or improvised in real time. The result? Inconsistent tone, diluted authority, factual risk and unpredictable AI outputs.

A well-designed internal prompt library solves this problem. It operationalizes AI usage into a repeatable, governed and measurable system, especially for teams producing high-volume content across SEO, paid campaigns, social media and long-form assets.

Let’s break down how to build one correctly.

Why random prompting breaks authority

AI tools respond directly to instruction quality. Poor prompts generate vague outputs. Overly creative prompts produce inconsistent messaging. And missing guardrails increase factual risk.

In high-authority content environments, especially B2B, this creates four structural problems:

- Tone fragmentation: Every team member writes in a different voice.

- Entity inconsistency: Product names, service definitions and terminology vary across pages.

- SEO instability: Search engines detect topic dilution when language shifts unpredictably.

- Governance risk: No record of what instructions were used to generate public content.

Studies on prompt engineering workflows report that structured prompting can increase output consistency by up to 40% compared to open-ended inputs. In content marketing, consistency correlates strongly with brand trust and AI citation stability.

Without a defined system of AI content prompts for marketing teams, content production becomes improvisational.

Authority is not built on improvisation.

It is built on systems.

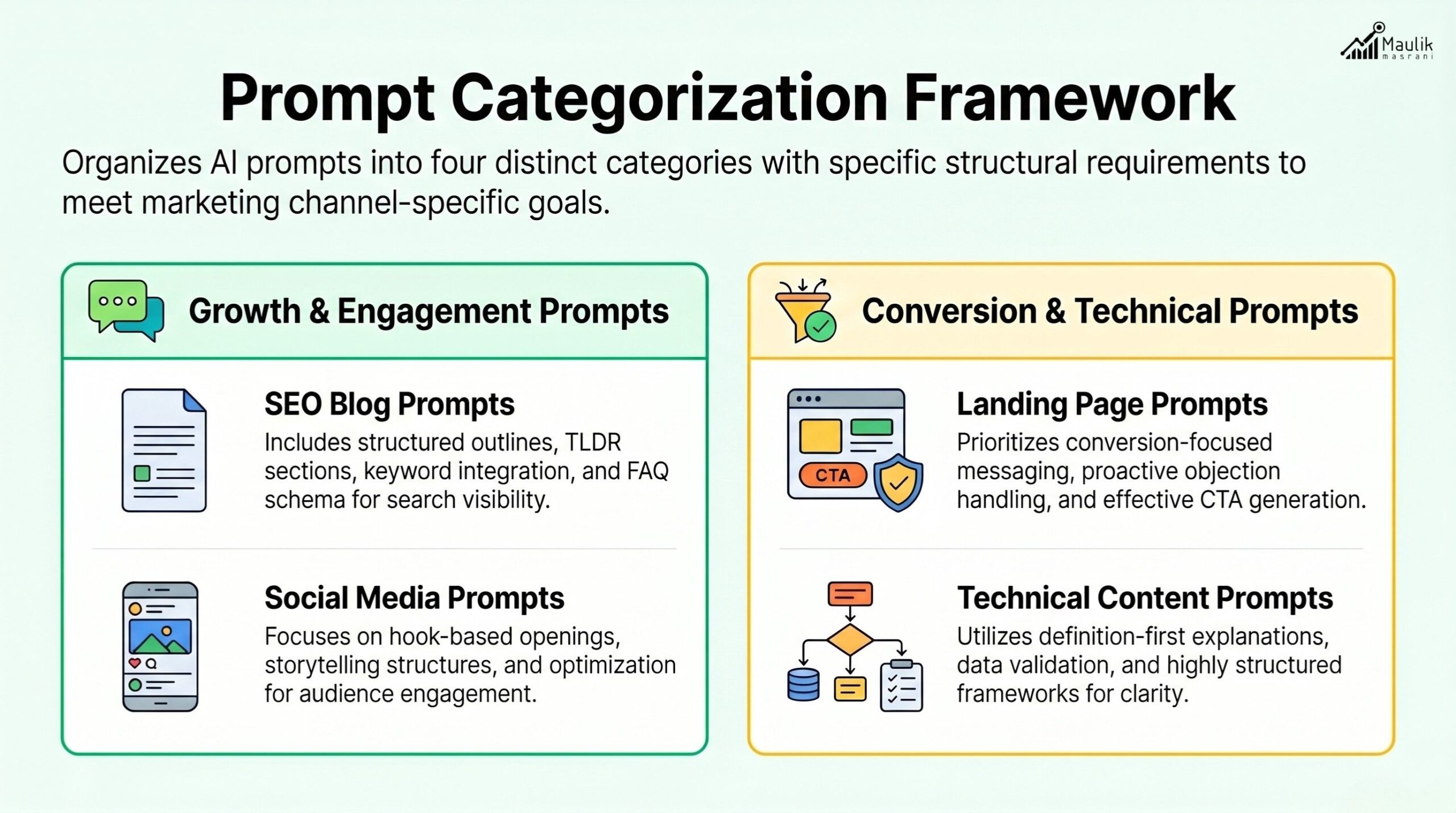

Categorizing prompts by content type

A high-performing internal prompt library is organized by content function, not stored as scattered documents.

Prompts should be categorized into structured modules aligned with SEO and AIO content production.

1. SEO Blog Prompts

- Long-form analytical structure

- TL;DR inclusion

- FAQ schema formatting

- Internal linking instructions

- Keyword integration logic

2. Landing Page Prompts

- Conversion-focused hero sections

- Objection-handling frameworks

- Structured benefit hierarchy

- CTA variation testing

3. Social Media Prompts

- Hook-first opening structure

- Carousel segmentation

- Executive insight formatting

- Data-backed storytelling

4. Educational or Technical Prompts

- Definition-first explanation

- Statistical validation instructions

- Structured comparison frameworks

Each prompt should clearly define:

- Role instruction (“Act as…”)

- Target audience

- Tone

- Formatting requirements

- Word count range

- Keyword placement logic

- Structural constraints (H1-H3, bullets, FAQs)

For example:

Weak prompt:

“Write about AI marketing.”

Structured prompt:

“Write a 1200-word analytical blog for decision-makers. Include TL;DR, H1-H3 hierarchy, FAQ schema and keyword integration without stuffing for SEO and AIO alignment.”

The second version creates predictability.

Predictability creates scalability in both SEO and AIO performance systems.
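As a rough illustration, the fields listed above could be captured in a small template object so that every prompt in the library is assembled the same way. The sketch below is one possible shape, not a prescribed implementation: the `PromptSpec` class, its field names and the sample values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptSpec:
    """Hypothetical container for the fields each library prompt should define."""
    role: str
    audience: str
    tone: str
    formatting: str
    word_count: tuple[int, int]   # (minimum, maximum) words
    keyword_logic: str
    structure: tuple[str, ...]    # structural constraints, e.g. headings, FAQs

    def render(self, topic: str) -> str:
        """Assemble the fields into one prompt string, one instruction per line."""
        low, high = self.word_count
        lines = [
            f"Act as {self.role}.",
            f"Write about {topic} for {self.audience}.",
            f"Tone: {self.tone}.",
            f"Formatting: {self.formatting}.",
            f"Length: {low}-{high} words.",
            f"Keywords: {self.keyword_logic}.",
            "Structure: " + ", ".join(self.structure) + ".",
        ]
        return "\n".join(lines)


# Sample values are assumptions chosen to mirror the structured prompt above.
seo_blog = PromptSpec(
    role="a senior B2B content strategist",
    audience="decision-makers",
    tone="analytical",
    formatting="Markdown",
    word_count=(1100, 1300),
    keyword_logic="integrate naturally, no stuffing",
    structure=("TL;DR", "H1-H3 hierarchy", "FAQ section"),
)

print(seo_blog.render("AI marketing"))
```

Keeping the fields separate, rather than storing one block of free text, makes it easier to change a single constraint (the word count range, for instance) without rewriting the whole prompt.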

Entity-safe prompting rules

Entity instability is one of the most overlooked AI risks.

Large language models generate variations in naming, positioning and definitions unless explicitly controlled. For brands operating in competitive verticals, this causes semantic drift.

Entity-safe prompting includes:

- Always defining primary brand terms.

- Locking product names exactly as approved.

- Avoiding synonym substitution for core services.

- Reinforcing definition-first writing patterns.

- Embedding FAQ reinforcement rules.

Example:

Instead of:

“Explain our solution.”

Use:

“Define [Primary Product Name] exactly as: ‘A cloud-based AI visibility optimization platform.’ Do not alter terminology.”

This protects:

- Search engine semantic understanding

- AI model consistency across platforms

- Brand knowledge graph stability

Over time, entity-safe prompting increases alignment between your website content and how AI systems reference your organization.

For marketing teams managing complex portfolios, this step is non-negotiable.
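The entity rules in this section can also be checked mechanically. The following is a minimal sketch, assuming a team keeps a list of approved names alongside the variants it wants to forbid. The product name `ExampleProduct`, the variant list and both helper functions are hypothetical; a simple case-insensitive text search like this will not catch every paraphrase.

```python
import re

# Hypothetical rule set: approved term -> variants that should never appear.
ENTITY_RULES = {
    "ExampleProduct": ["Example Product", "ExampleTool"],
}


def entity_clause(name: str, definition: str) -> str:
    """Build the locking instruction that gets embedded in a prompt."""
    return f"Define {name} exactly as: '{definition}' Do not alter terminology."


def find_entity_drift(text: str, rules: dict[str, list[str]]) -> list[tuple[str, str]]:
    """Return (disallowed_variant, approved_term) pairs found in the text."""
    issues = []
    for approved, variants in rules.items():
        for variant in variants:
            # Case-insensitive literal match; re.escape keeps the variant literal.
            if re.search(re.escape(variant), text, flags=re.IGNORECASE):
                issues.append((variant, approved))
    return issues


clause = entity_clause(
    "ExampleProduct", "A cloud-based AI visibility optimization platform."
)
draft = "Example product helps teams scale. ExampleProduct is cloud-based."
drift = find_entity_drift(draft, ENTITY_RULES)
print(clause)
print(drift)
```

Running a check like this on generated drafts before publication gives reviewers a short list of terminology issues to fix, instead of relying on manual proofreading alone.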

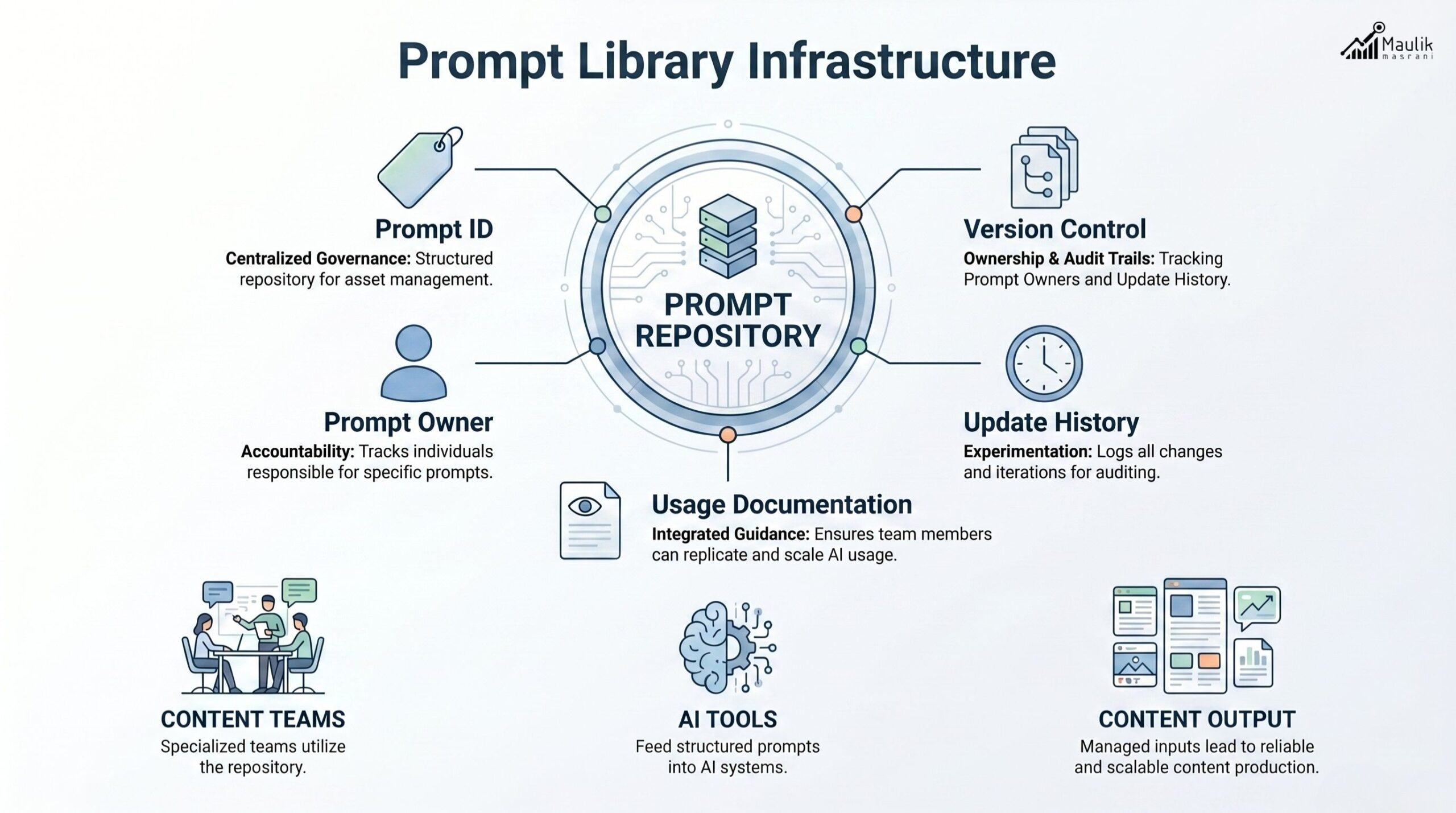

Version-controlled prompt repository

An effective internal prompt library must operate like code.

That means version control.

Prompts evolve. AI models update. Messaging changes. If you cannot trace which prompt version generated which asset, governance breaks down.

Recommended structure:

- Centralized repository (Notion, Git, or structured document management)

- Prompt ID numbering system

- Change logs

- Owner assignment

- Last updated timestamp

- Approved use-case documentation

For example:

| Prompt ID | Content Type | Version | Owner | Updated |
| --- | --- | --- | --- | --- |
| SEO-01 | Long-form Blog | v2.3 | Content Lead | Jan 2026 |
| SM-04 | LinkedIn Carousel | v1.2 | Social Team | Feb 2026 |

This allows:

- Auditability

- Continuous optimization

- A/B testing of prompt structures

- Cross-team training consistency

Advanced teams even measure output performance by prompt version to determine which structural instruction produces higher engagement or better search visibility.

Prompts are assets. Treat them as such.
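For teams that keep the repository in code rather than in a document tool, one repository entry might look like the sketch below. It is an assumption-laden illustration: the `PromptRecord` class, its fields and the minor-version bump rule are hypothetical, and the sample values simply echo the SEO-01 example from this section.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class PromptRecord:
    """Hypothetical repository entry: ID, owner, version, timestamp, change log."""
    prompt_id: str
    content_type: str
    owner: str
    text: str
    version: str = "v1.0"
    updated: date = field(default_factory=date.today)
    changelog: list[str] = field(default_factory=list)

    def revise(self, new_text: str, note: str, when: date) -> None:
        """Store new prompt text, bump the minor version and log the change."""
        major, minor = self.version.lstrip("v").split(".")
        self.version = f"v{major}.{int(minor) + 1}"
        self.text = new_text
        self.updated = when
        self.changelog.append(f"{self.version} ({when.isoformat()}): {note}")


record = PromptRecord(
    prompt_id="SEO-01",
    content_type="Long-form Blog",
    owner="Content Lead",
    text="Write a 1200-word analytical blog for decision-makers.",
    version="v2.3",
    updated=date(2026, 1, 15),
)
record.revise(
    "Write a 1200-word analytical blog for decision-makers. Include a TL;DR.",
    "Added TL;DR requirement",
    date(2026, 2, 1),
)
print(record.version, record.changelog)
```

Recording the prompt ID and version next to each published asset is what makes the later steps possible: an audit or A/B comparison can only attribute results to a prompt if that link was stored at generation time.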

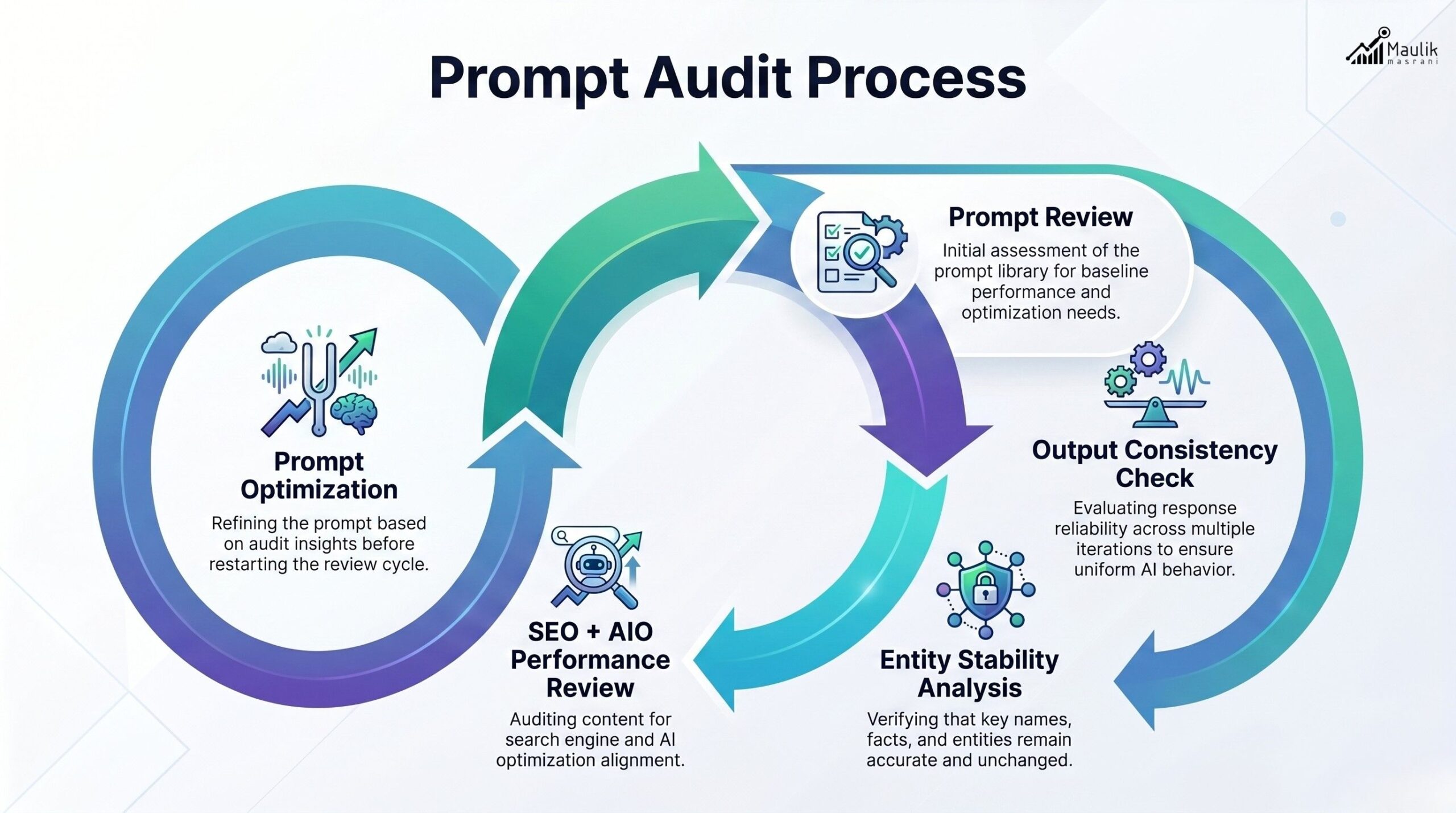

Prompt audit process

Most organizations audit content. Few audit prompts.

A quarterly prompt audit should include:

1. Output Consistency Review

- Does the content follow structural constraints?

- Are tone and authority stable?

- Is keyword usage natural?

2. Entity Stability Check

- Are brand terms consistent?

- Is terminology aligned across assets?

3. AI Hallucination Risk Analysis

- Are unsupported claims appearing?

- Are statistics verifiable?

4. Performance Correlation

- Which prompt versions correlate with higher organic visibility?

- Which prompts lead to stronger engagement metrics?

5. Optimization Cycle

- Refine underperforming prompt structures.

- Remove ambiguous instructions.

- Reinforce formatting clarity.

Prompt audits ensure your AI content prompts for marketing teams evolve alongside AI model capabilities.

Without auditing, prompt libraries become outdated.

With auditing, they become strategic infrastructure.
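Parts of the output consistency review lend themselves to automation. As a hedged example, the sketch below checks a generated draft against a word count range and a list of required elements. The `audit_output` function and its parameters are hypothetical, and the phrase matching is deliberately simple. Tone, authority and factual accuracy still need human review.

```python
def audit_output(
    text: str,
    word_range: tuple[int, int],
    required_elements: list[str],
) -> list[str]:
    """Return human-readable findings; an empty list means these checks passed."""
    findings = []

    # Structural constraint: word count must fall inside the allowed range.
    words = len(text.split())
    low, high = word_range
    if not low <= words <= high:
        findings.append(f"word count {words} outside {low}-{high}")

    # Structural constraint: each required element must appear somewhere.
    lowered = text.lower()
    for element in required_elements:
        if element.lower() not in lowered:
            findings.append(f"missing required element: {element}")

    return findings


sample = "TL;DR: short summary.\n\nBody text here."
findings = audit_output(sample, (5, 10), ["TL;DR", "FAQ"])
print(findings)
```

Findings collected this way can be grouped by prompt ID and version, which points the optimization cycle at the prompts that most often produce non-compliant drafts.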

FAQs

Should companies use internal prompt libraries?

Yes. Companies using an internal prompt library tend to see greater content consistency, lower hallucination risk, stronger brand authority and improved AI visibility alignment. It turns AI usage into a repeatable operational system rather than ad-hoc experimentation.

How do prompt libraries improve SEO performance?

Structured prompts ensure consistent keyword placement, semantic alignment, and FAQ schema inclusion. This increases clarity for search engines and AI models, improving ranking stability and answer engine visibility.

Who should manage a prompt repository?

Typically, a content strategist or AI operations lead manages version control, updates and audits. Ownership ensures governance and prevents uncontrolled modifications.

How often should prompt audits occur?

Quarterly audits are recommended. However, high-volume teams may conduct monthly reviews to optimize performance and adapt to AI model updates.

Conclusion

AI tools have introduced speed and scale to content production, but without structure, they often produce inconsistent results. An internal prompt library brings order to this process by standardizing how teams interact with AI systems. By organizing prompts by content type, enforcing entity-safe rules and maintaining version control, marketing teams can ensure that every piece of AI-generated content aligns with brand messaging, SEO goals and operational governance.

More importantly, structured prompting supports long-term authority in evolving AIO environments. When prompts are audited and refined regularly, they become strategic assets that improve output quality, protect brand terminology and strengthen search visibility. Teams that build disciplined prompt systems will scale AI content more reliably than those relying on ad-hoc experimentation.