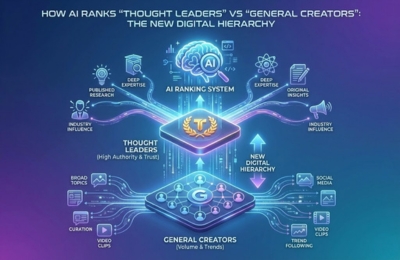

AI-powered search engines and Large Language Models no longer treat all creators equally. In effect, they apply a form of authority scoring that distinguishes true thought leaders from general content creators based on expertise signals, trust markers and depth of contribution. This shift has created a new digital hierarchy in which credibility, consistency and structured knowledge determine visibility. This article explains how AI makes those distinctions and what it takes to elevate yourself into the “AI thought leader” tier.

Expert vs Creator Ranking

The digital content ecosystem has entered a new phase. Visibility is no longer determined solely by publishing frequency, engagement metrics, or platform algorithms. Instead, AI ranks thought leaders and general creators differently, based on how Large Language Models interpret authority, expertise and reliability at scale.

Search engines powered by generative AI and conversational systems like ChatGPT, Gemini, Claude and Perplexity are not simply retrieving content. They are synthesizing answers. In that synthesis process, they prioritize voices they trust to represent accurate, stable and high-confidence knowledge.

This creates a clear separation. General creators contribute to volume and discovery. Thought leaders shape narratives, frameworks and explanations that AI systems repeatedly reference when forming answers. Understanding this distinction is now critical for anyone aiming to build long-term digital authority.

How AI Distinguishes Experts

AI systems do not assess expertise the way humans do. They do not read bios, admire follower counts, or respond emotionally to branding. Instead, they evaluate patterns across massive datasets.

At the core of this evaluation is what this article calls authority scoring. Large Language Models pick up on how often a source is cited, how consistently it explains concepts and how reliably its information aligns with verified knowledge across domains.

Experts are identified through repetition of high-quality signals over time. When AI repeatedly encounters structured explanations, clear definitions and consistent viewpoints tied to the same entity or author, it begins to treat that source as a reference point rather than a transient contributor.

General creators, by contrast, often publish trend-driven or surface-level content that may perform well socially but lacks the depth and consistency required for long-term AI trust.
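
To make the idea concrete, the three factors named above (citation frequency, consistency of explanation and alignment with verified knowledge) can be pictured as a toy weighted score. This is only an illustrative sketch: real LLMs do not expose such a formula, and the `SourceStats` fields, the `authority_score` function, the weights and the sample numbers are all assumptions invented for this example.

```python
from dataclasses import dataclass


@dataclass
class SourceStats:
    """Hypothetical per-source observations; every field is illustrative."""
    citations: int      # times other documents cite this source
    max_citations: int  # largest citation count in the comparison set
    consistency: float  # 0..1, how stable its explanations are across pieces
    alignment: float    # 0..1, agreement with independently verified facts


def authority_score(s: SourceStats, weights=(0.4, 0.3, 0.3)) -> float:
    """Weighted average of three normalized signals, in the range 0..1."""
    citation_rate = s.citations / s.max_citations if s.max_citations else 0.0
    w_cite, w_cons, w_align = weights  # assumed weights, not measured values
    return w_cite * citation_rate + w_cons * s.consistency + w_align * s.alignment


# Made-up numbers for a consistent expert and a trend-driven creator.
expert = SourceStats(citations=80, max_citations=100, consistency=0.9, alignment=0.95)
creator = SourceStats(citations=20, max_citations=100, consistency=0.4, alignment=0.7)

print(f"expert:  {authority_score(expert):.3f}")
print(f"creator: {authority_score(creator):.3f}")
```

The point of the sketch is the shape of the comparison rather than the numbers: a source that is cited often but explains things inconsistently would still score below one that is strong on all three signals.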

Expertise Signals

The strongest differentiator between experts and creators lies in the signals AI can measure and validate.

Depth

- Depth is the first signal. Thought leaders do not just state conclusions. They explain reasoning, outline frameworks and explore implications. AI systems favor content that demonstrates conceptual completeness rather than isolated tips or opinions.

Citations

- Citations act as reinforcement. When content references studies, platforms or recognized research, such as a LinkedIn influence study, it strengthens contextual reliability. Citations help AI cross-check information against other trusted sources, reducing ambiguity.

Trust

- Trust emerges from consistency and safety. Content that aligns with facts, avoids exaggeration and stays within the safety guidelines AI systems apply is more likely to be reused in generated answers. This is where practices like AI safety alignment and structured fact presentation matter, especially in high-impact topics or those adjacent to "Your Money or Your Life" (YMYL) subjects such as health and finance.

Together, these signals form the backbone of expert vs creator ranking in AI-driven environments.

Content Signals for General Creators

General creators play an important role in the ecosystem, but their content typically sends different signals to AI systems.

These signals often include:

- Broad topical coverage without sustained depth

- Reactive content aligned with short-term trends

- Opinion-led narratives without supporting frameworks

- High engagement but low semantic reinforcement

From an AI perspective, this type of content is useful for discovering what people are talking about, but not for defining how topics should be explained. As a result, general creators are less likely to be cited or paraphrased in AI-generated answers, even if their content performs well on social platforms.

This does not mean general creators lack value. It means their content is categorized differently. Without structured optimization for LLMs, AI systems struggle to treat their output as authoritative reference material.

How to Elevate Yourself to “AI Thought Leader” Status

Becoming an AI-recognized thought leader is not about producing more content. It is about producing the right kind of content, in a way AI systems can understand, trust and reuse.

The first step is intentional optimization for LLMs. This involves structuring ideas clearly, defining terminology explicitly and maintaining consistency across articles, platforms and explanations.
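
One way to check the consistency part of this step is to compare how the same term is defined across your own articles. The sketch below is a minimal illustration that assumes you keep a small glossary per article; the `conflicting_terms` helper, the article names and the sample definitions are all hypothetical.

```python
from collections import defaultdict


def conflicting_terms(glossaries):
    """Return terms that are defined differently across articles.

    `glossaries` maps an article name to a {term: definition} dict.
    """
    seen = defaultdict(set)
    for terms in glossaries.values():
        for term, definition in terms.items():
            # Normalize case and whitespace so trivial differences are ignored.
            seen[term.lower()].add(" ".join(definition.lower().split()))
    return sorted(term for term, defs in seen.items() if len(defs) > 1)


# Made-up glossaries for three hypothetical posts.
glossaries = {
    "post-a": {"Authority scoring": "How AI systems weigh sources when forming answers."},
    "post-b": {
        "authority scoring": "how AI systems weigh sources  when forming answers.",
        "Thought leader": "A source AI systems repeatedly reference.",
    },
    "post-c": {"Thought leader": "Anyone with a large following."},
}

conflicts = conflicting_terms(glossaries)
print(conflicts)
```

Here "authority scoring" is defined the same way twice once case and spacing are normalized, while "thought leader" has two competing definitions and is flagged for review.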

The second step is aligning with modern AI visibility frameworks such as AI Optimization (AIO), Answer Engine Optimization (AEO) and Generative Engine Optimization (GEO). These approaches focus on how content is interpreted by answer engines, not just how it ranks in traditional search results. Internal linking between related concepts reinforces semantic authority and helps AI build stronger associations.
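
A widely documented way to make question-and-answer content machine-readable is schema.org `FAQPage` markup, usually embedded in a page as JSON-LD. The sketch below builds such an object in Python from two of this article's own FAQ entries. The `faq_jsonld` helper is a hypothetical name, and whether any particular answer engine actually uses this markup is an assumption, not something the markup guarantees.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


markup = faq_jsonld([
    (
        "Why does AI trust some experts more?",
        "AI trusts experts who demonstrate consistent depth, reliable "
        "explanations and alignment with verified knowledge across "
        "multiple sources and contexts.",
    ),
    (
        "Can general creators become AI thought leaders?",
        "Yes. By focusing on depth, structured explanations and optimization "
        "for LLMs, general creators can transition into authoritative voices "
        "over time.",
    ),
])

# The resulting JSON would go inside a <script type="application/ld+json"> tag.
print(json.dumps(markup, indent=2))
```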

The third step is governance. Applying principles from AI safety alignment helps your content avoid speculative claims, unsupported advice and misleading generalizations. This is especially important as AI systems increasingly prioritize safe, reliable sources.

Over time, these practices compound. AI systems begin to recognize patterns of expertise and elevate those voices when constructing responses. This is how creators transition into thought leaders within the new digital hierarchy.

FAQs

Why does AI trust some experts more?

AI trusts experts who demonstrate consistent depth, reliable explanations and alignment with verified knowledge across multiple sources and contexts.

How does authority scoring work in LLMs?

Authority scoring, as described in this article, weighs repetition, consistency, citation patterns and semantic clarity to determine which sources should influence generated answers.

Can general creators become AI thought leaders?

Yes. By focusing on depth, structured explanations and optimization for LLMs, general creators can transition into authoritative voices over time.

Does engagement matter less than expertise for AI ranking?

Engagement still matters for discovery, but expertise and trust signals play a larger role in determining whether AI systems reuse or cite content.

Conclusion

The rise of AI-powered search has reshaped influence online. Authority is no longer about who publishes the loudest or fastest. It is about who contributes the most reliable understanding of a topic over time.

As AI ranks thought leaders higher than general creators, the opportunity is clear. Those who invest in depth, clarity and trust-building will shape how knowledge is distributed in AI-driven ecosystems. The new hierarchy rewards expertise that is structured, consistent and aligned with how intelligent systems learn.