As AI systems increasingly shape brand perception, enterprises must move beyond traditional marketing compliance and adopt structured generative governance. This means implementing an enterprise-ready AI governance model that covers risk control, approval flows, version tracking, and AI representation monitoring. Without it, organizations lose narrative control across Google and LLMs. With it, they gain scalable, measurable enterprise AIO control that protects reputation and improves AI visibility consistency.

Generative Content Governance

AI systems now summarize, cite, recommend and interpret brand content without asking permission. Google’s generative results, ChatGPT-style assistants, and enterprise copilots shape how customers perceive your company before they visit your website.

This shift demands a new discipline: generative governance. Unlike traditional content governance (focused on brand tone, compliance, and publishing workflows), generative governance ensures:

- AI systems represent your organization accurately

- High-risk claims are controlled

- Entity data remains consistent

- Version changes are traceable

- AI-generated outputs align with corporate policy

At enterprise scale, this becomes less about content creation and more about system-level oversight.

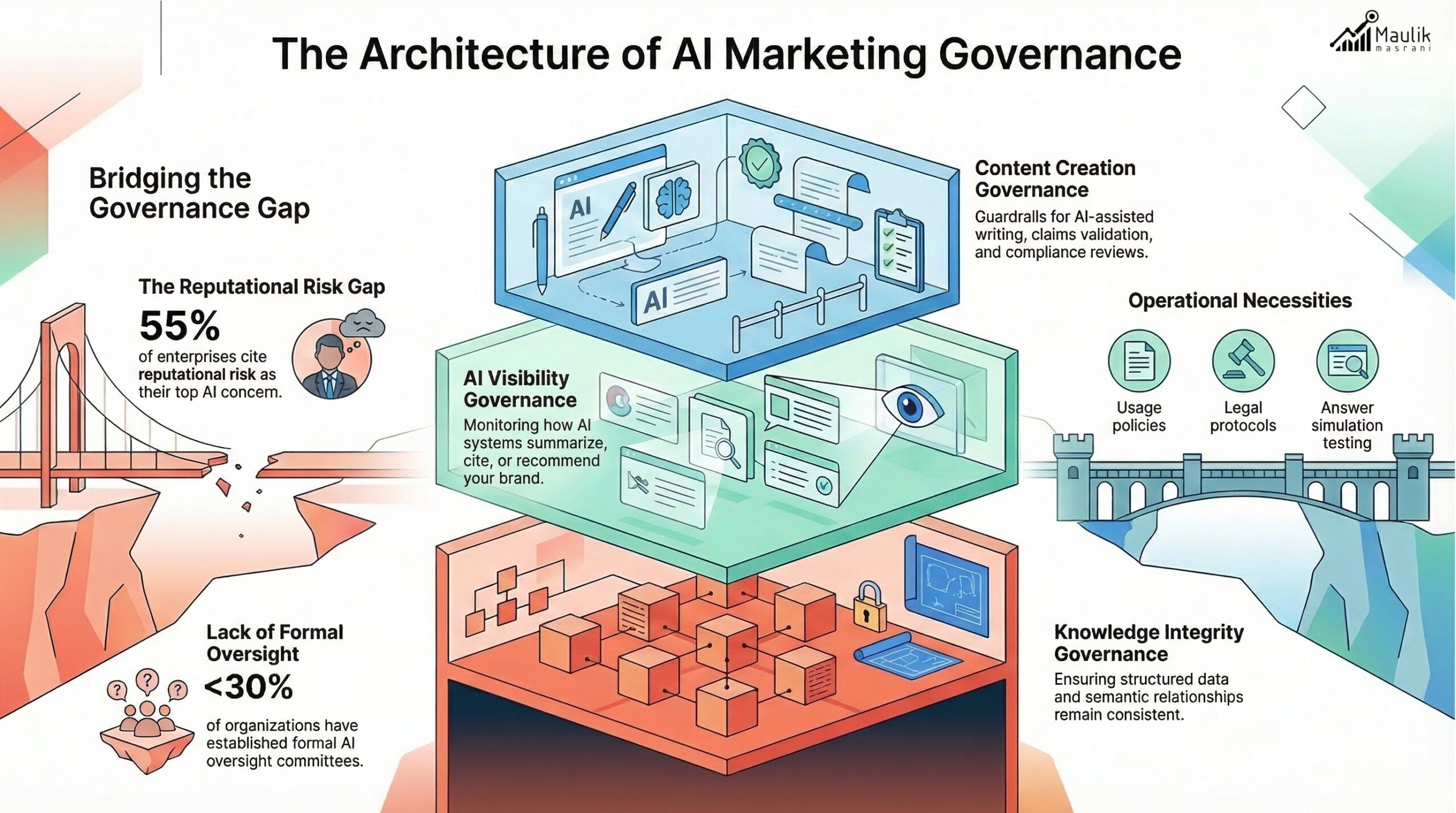

What is AI governance in marketing?

AI governance in marketing refers to the structured policies, processes and technologies that control how AI-generated and AI-interpreted content represents a brand.

It operates across three layers:

Content Creation Governance

Guardrails for AI-assisted writing, claims validation, and compliance reviews.

AI Visibility Governance

Monitoring how AI systems summarize, cite, or recommend your brand.

Knowledge Integrity Governance

Ensuring structured data, entity references and semantic relationships remain consistent.

According to industry surveys, over 55% of enterprises cite reputational risk as their top AI concern. Yet fewer than 30% have formal AI oversight committees.

That gap is where generative governance becomes critical.

An effective AI governance model includes:

- AI content usage policies

- Legal sign-off protocols

- Structured metadata validation

- AI-answer simulation testing

- Escalation procedures for misrepresentation

This is not a compliance add-on. It is an operational necessity.

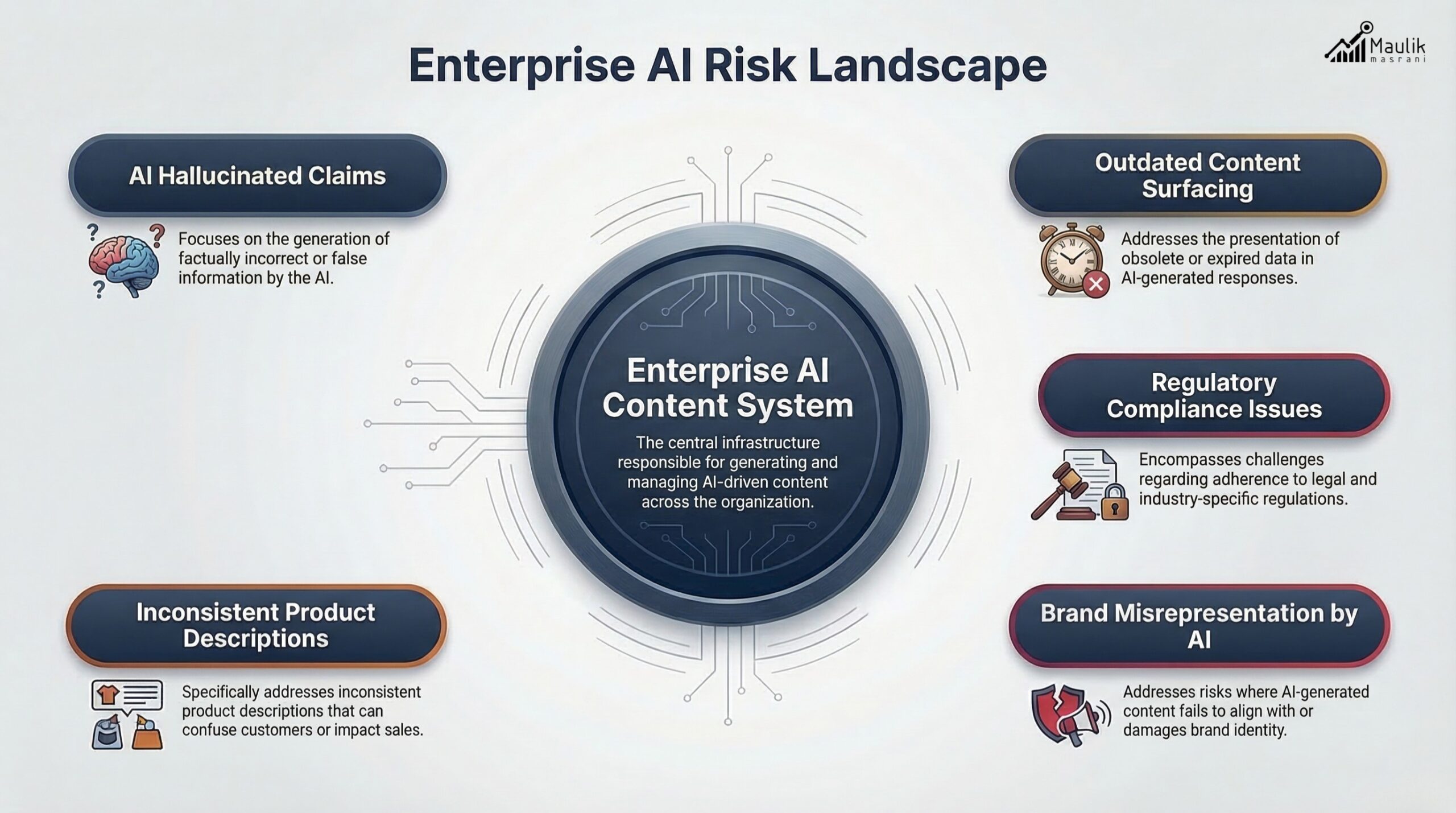

Risk management in AIO

Artificial Intelligence Optimization (AIO) introduces unique enterprise risks:

- Hallucinated claims attributed to your brand

- Outdated information surfaced by AI

- Inconsistent product descriptions across channels

- Regulatory violations due to uncontrolled generative content

- Misalignment between AI summaries and official messaging

Without governance, scale amplifies risk.

A structured enterprise AIO control layer mitigates exposure through:

1. Claim Validation Protocols

Every high-impact claim must be:

- Fact-checked

- Timestamped

- Linked to authoritative sources

- Logged in an internal validation registry
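
To make the four requirements above concrete, here is a minimal sketch of what a validation registry entry might look like. The class names (`ValidatedClaim`, `ClaimRegistry`) and field names are hypothetical, and the in-memory list stands in for whatever database or CMS an enterprise actually uses.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ValidatedClaim:
    """One entry in a hypothetical internal validation registry."""
    claim_text: str
    source_urls: tuple[str, ...]   # authoritative sources backing the claim
    fact_checked_by: str           # reviewer who verified the claim
    validated_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)  # timestamp
    )


class ClaimRegistry:
    """In-memory registry; a real deployment would persist entries."""

    def __init__(self) -> None:
        self._entries: list[ValidatedClaim] = []

    def register(self, claim: ValidatedClaim) -> None:
        # Refuse to log a claim that lacks text, a source, or a reviewer.
        if not claim.claim_text.strip():
            raise ValueError("claim text is empty")
        if not claim.source_urls:
            raise ValueError("claim has no authoritative source")
        if not claim.fact_checked_by.strip():
            raise ValueError("claim has no fact-checker on record")
        self._entries.append(claim)

    def find(self, claim_text: str) -> Optional[ValidatedClaim]:
        """Return the logged entry for an exact claim text, if any."""
        for entry in self._entries:
            if entry.claim_text == claim_text:
                return entry
        return None
```

Rejecting incomplete entries at registration time is one possible design choice; it keeps the registry usable as evidence during an audit because every logged claim carries a source, a reviewer, and a timestamp.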

2. AI Simulation Testing

Before publishing critical content:

- Test prompts across major LLM platforms

- Document how the brand is summarized

- Identify distortions or gaps
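
The testing steps above could be sketched roughly as follows. This is an illustration under stated assumptions: each platform is modeled as a plain callable, the stub answers and the brand name "Acme" are made up, and gap detection is a crude substring check rather than a real semantic comparison. No vendor SDK calls are shown.

```python
from typing import Callable

# Each "platform" is any callable that takes a prompt and returns text.
# Real integrations (vendor SDKs, HTTP calls) are deliberately left out.
Platform = Callable[[str], str]


def simulate(
    prompt: str,
    platforms: dict[str, Platform],
    required_facts: list[str],
) -> dict[str, list[str]]:
    """Return, per platform, the required facts missing from its answer."""
    gaps: dict[str, list[str]] = {}
    for name, ask in platforms.items():
        answer = ask(prompt).lower()
        # Case-insensitive substring match; a fact counts as covered
        # only if its wording appears in the answer.
        gaps[name] = [f for f in required_facts if f.lower() not in answer]
    return gaps


# Stub platforms standing in for real LLM endpoints (hypothetical data).
stubs = {
    "platform_a": lambda p: "Acme builds payroll software for small firms.",
    "platform_b": lambda p: "Acme is a software company.",
}
report = simulate("What does Acme specialize in?", stubs, ["payroll"])
print(report)  # {'platform_a': [], 'platform_b': ['payroll']}
```

Storing each report alongside the date and the content version being tested would give the documentation trail described above.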

3. Regulatory Alignment

Industries like finance, healthcare and enterprise SaaS face strict disclosure rules. Generative outputs must be audited for:

- Misleading phrasing

- Missing disclaimers

- Unauthorized performance claims
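
Part of this audit can be automated as a first-pass screen. The sketch below assumes a hypothetical disclaimer string and a hypothetical pattern for performance claims; real rules would come from legal and compliance teams. Misleading phrasing is not covered here, since it generally needs human judgment.

```python
import re

# Hypothetical rules for illustration only.
PERFORMANCE_CLAIM = re.compile(
    r"\bguarantee[sd]?\b|\brisk-free\b|\d+(?:\.\d+)?\s*%\s+returns?\b",
    re.IGNORECASE,
)
REQUIRED_DISCLAIMER = "this is not financial advice"


def audit_output(text: str) -> list[str]:
    """Return issue labels found in one generative output."""
    issues = []
    if PERFORMANCE_CLAIM.search(text):
        issues.append("unauthorized performance claim")
    if REQUIRED_DISCLAIMER not in text.lower():
        issues.append("missing disclaimer")
    return issues
```

A non-empty result would route the output to the human reviewers responsible for regulated messaging rather than block it automatically.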

Generative governance reduces “AI drift”, the gradual distortion of brand representation over time.

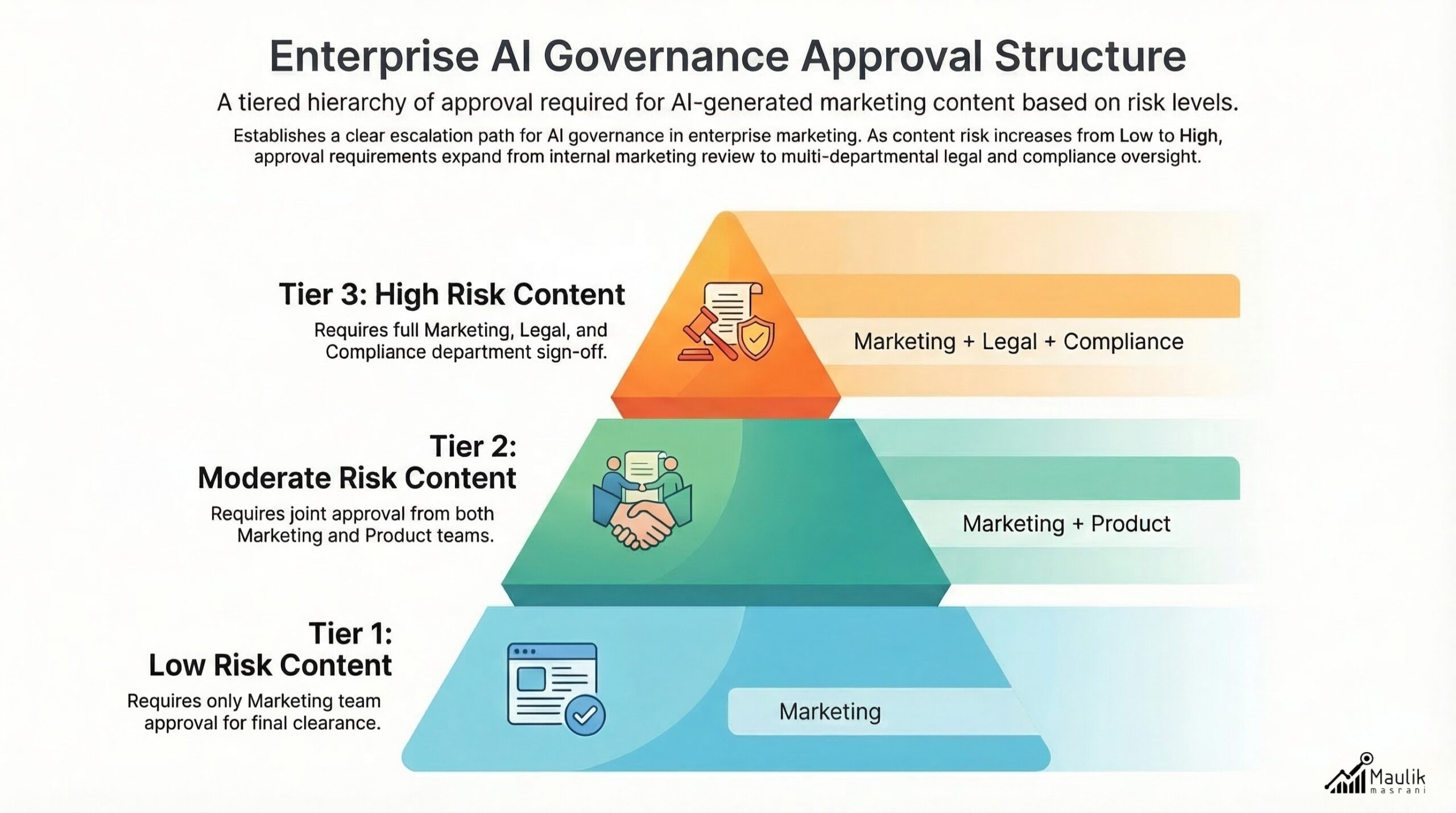

Approval hierarchies

At scale, governance collapses without clear ownership.

Enterprises must define structured approval hierarchies across:

- Marketing

- Legal

- Compliance

- Data Governance

- Executive Leadership

A recommended structure:

Tier 1 – Low-Risk Content

Operational blogs, educational resources

→ Marketing approval only

Tier 2 – Moderate Risk

Product updates, strategic messaging

→ Marketing + Product review

Tier 3 – High Risk

Performance claims, regulated messaging

→ Marketing + Legal + Compliance sign-off
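
The three tiers above map naturally onto a small lookup table. The following is a minimal sketch; the team identifiers (`"marketing"`, `"product"`, and so on) and the function names are illustrative, not part of any specific CMS.

```python
from enum import Enum


class Tier(Enum):
    LOW = 1       # operational blogs, educational resources
    MODERATE = 2  # product updates, strategic messaging
    HIGH = 3      # performance claims, regulated messaging


# Required sign-offs per tier, taken from the structure above.
REQUIRED_APPROVERS = {
    Tier.LOW: {"marketing"},
    Tier.MODERATE: {"marketing", "product"},
    Tier.HIGH: {"marketing", "legal", "compliance"},
}


def missing_approvals(tier: Tier, approvals: set[str]) -> set[str]:
    """Return the teams that still need to sign off before publishing."""
    return REQUIRED_APPROVERS[tier] - approvals


def can_publish(tier: Tier, approvals: set[str]) -> bool:
    """True once every required team for the tier has approved."""
    return not missing_approvals(tier, approvals)
```

For example, `missing_approvals(Tier.HIGH, {"marketing", "legal"})` returns `{"compliance"}`, which a workflow tool could surface as the next pending reviewer.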

This hierarchy must integrate into your content management system and AI workflow tools.

Without documented approval trails, organizations cannot defend or trace generative outputs during audits.

Version control & historical tracking

AI systems may reference archived pages or cached content. If version history is unmanaged, legacy claims can resurface.

Effective generative governance includes:

- Structured version logging

- Timestamped change histories

- Change rationale documentation

- Archived schema validation

- Content deprecation tracking

This allows enterprises to:

- Demonstrate compliance evolution

- Identify outdated AI citations

- Provide corrective updates
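
One way to picture the logging practices above is an append-only change history keyed by URL. This sketch is hypothetical: `VersionLog`, its methods, and the use of a SHA-256 content hash are assumptions chosen for illustration, and archived schema validation is not covered.

```python
import hashlib
from dataclasses import dataclass
from datetime import datetime, timezone


def _digest(content: str) -> str:
    """SHA-256 hex digest of the published text."""
    return hashlib.sha256(content.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class VersionEntry:
    url: str
    content_hash: str
    changed_at: datetime   # timestamped change history
    rationale: str         # why the change was made
    deprecated: bool       # True once the page is retired


class VersionLog:
    """Append-only, in-memory change history (hypothetical design)."""

    def __init__(self) -> None:
        self._entries: list[VersionEntry] = []

    def record(self, url: str, content: str, rationale: str,
               deprecated: bool = False) -> VersionEntry:
        if not rationale.strip():
            raise ValueError("a change rationale is required")
        entry = VersionEntry(url, _digest(content),
                             datetime.now(timezone.utc), rationale, deprecated)
        self._entries.append(entry)
        return entry

    def history(self, url: str) -> list[VersionEntry]:
        """All logged versions of a URL, oldest first."""
        return [e for e in self._entries if e.url == url]

    def is_current(self, url: str, quoted: str) -> bool:
        """True if `quoted` matches the latest non-deprecated version."""
        past = self.history(url)
        if not past or past[-1].deprecated:
            return False
        return past[-1].content_hash == _digest(quoted)
```

With a log like this, text that an AI system attributes to a page can be checked against `is_current` to flag citations that rely on a superseded or deprecated version.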

Version governance is not optional in enterprise environments; it is foundational.

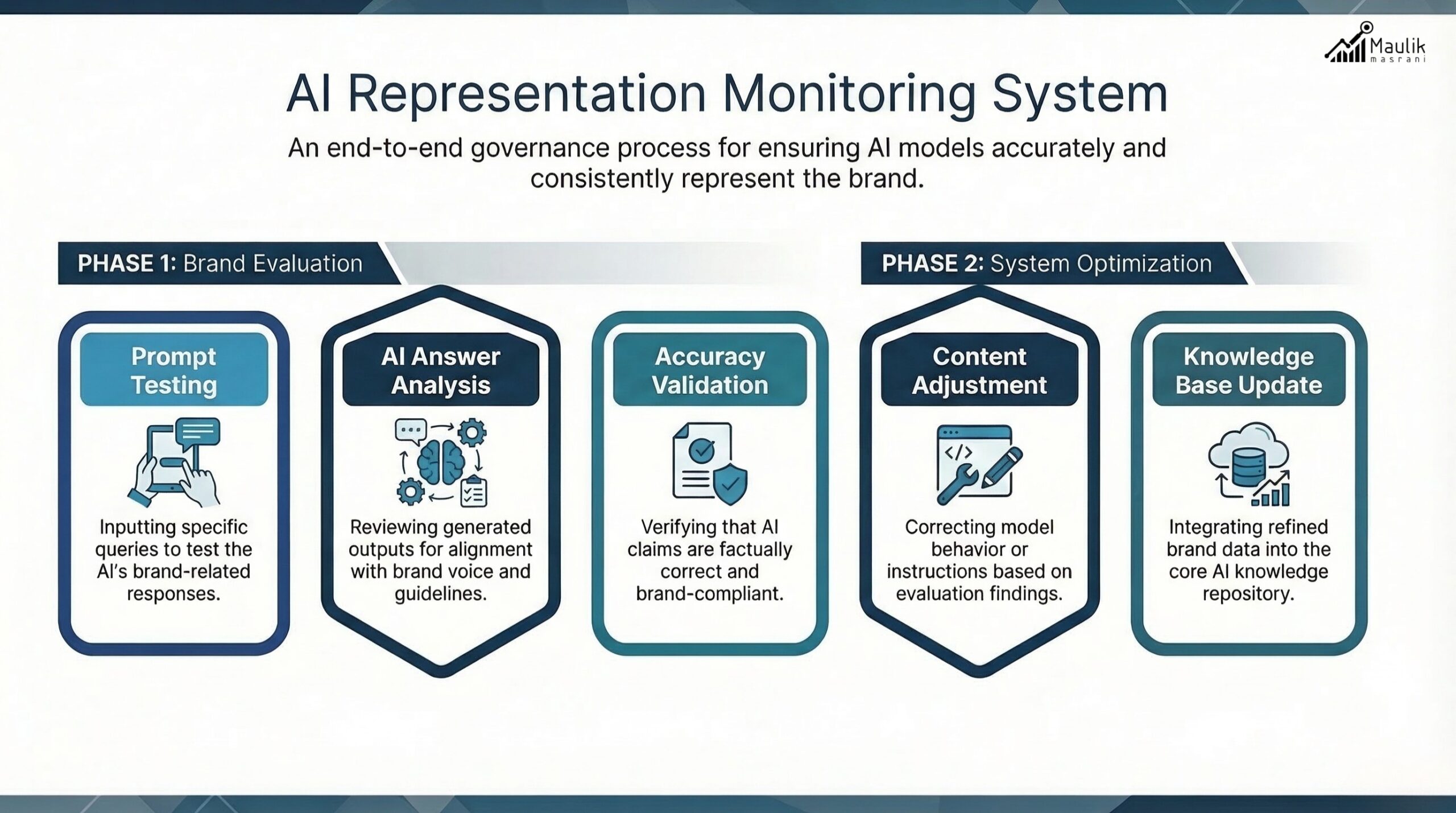

Monitoring AI representation

The most overlooked governance layer is monitoring how AI systems represent your organization externally.

This requires continuous AI representation audits:

1. Prompt-Based Testing

Run recurring prompts such as:

- “What does [Brand] specialize in?”

- “Compare [Brand] with competitors.”

- “What are the risks of using [Brand Product]?”

Document:

- Factual accuracy

- Omitted services

- Incorrect positioning

- Competitive bias
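
A recurring audit of this kind could be recorded along the following lines. The prompt templates mirror the examples above; the `Finding` categories mirror the four documentation points. Everything else (class names, the platform labels, the brand "Acme") is a hypothetical placeholder, and the findings themselves are assumed to be entered by a human reviewer.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum

PROMPT_TEMPLATES = [
    "What does {brand} specialize in?",
    "Compare {brand} with competitors.",
    "What are the risks of using {product}?",
]


class Finding(Enum):
    FACTUAL_ERROR = "factual accuracy"
    OMITTED_SERVICE = "omitted services"
    INCORRECT_POSITIONING = "incorrect positioning"
    COMPETITIVE_BIAS = "competitive bias"


def build_prompts(brand: str, product: str) -> list[str]:
    """Fill the recurring prompt templates for one brand and product."""
    return [t.format(brand=brand, product=product) for t in PROMPT_TEMPLATES]


@dataclass
class AuditRecord:
    prompt: str
    platform: str
    findings: list[Finding]   # issues a reviewer noted in the answer


def summarize(records: list[AuditRecord]) -> Counter:
    """Count how often each finding type appears across an audit run."""
    return Counter(f for r in records for f in r.findings)
```

Comparing these counts between audit runs gives a simple view of whether representation is improving or drifting.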

2. Entity Alignment Checks

Ensure:

- Official product names are consistent

- Executive titles match the current structure

- Structured data is aligned across web properties
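
These checks amount to comparing each web property against a single canonical record. A minimal sketch, assuming the entity fields have already been extracted into plain dictionaries (the field names and property names in the usage example are made up):

```python
def find_mismatches(
    canonical: dict[str, str],
    properties: dict[str, dict[str, str]],
) -> list[tuple[str, str, str]]:
    """Compare each property's entity fields with the canonical record.

    Returns (property, field, found_value) for every disagreement.
    A field absent from a property is reported as "<missing>".
    """
    mismatches = []
    for prop, fields in properties.items():
        for key, expected in canonical.items():
            found = fields.get(key, "<missing>")
            if found != expected:
                mismatches.append((prop, key, found))
    return mismatches


# Hypothetical data for illustration.
canonical = {"product_name": "Acme Payroll", "ceo_title": "Chief Executive Officer"}
sites = {
    "main_site": {"product_name": "Acme Payroll", "ceo_title": "Chief Executive Officer"},
    "help_center": {"product_name": "AcmePay"},
}
print(find_mismatches(canonical, sites))
# [('help_center', 'product_name', 'AcmePay'), ('help_center', 'ceo_title', '<missing>')]
```

Exact string comparison is deliberately strict here; whether to tolerate casing or abbreviation differences is a policy decision for the governance team.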

3. Competitive Sentiment Drift

AI systems learn from public discourse. Enterprises must monitor:

- Citation patterns

- Brand authority strength

- Narrative positioning shifts

This transforms governance from reactive compliance into proactive strategic oversight.

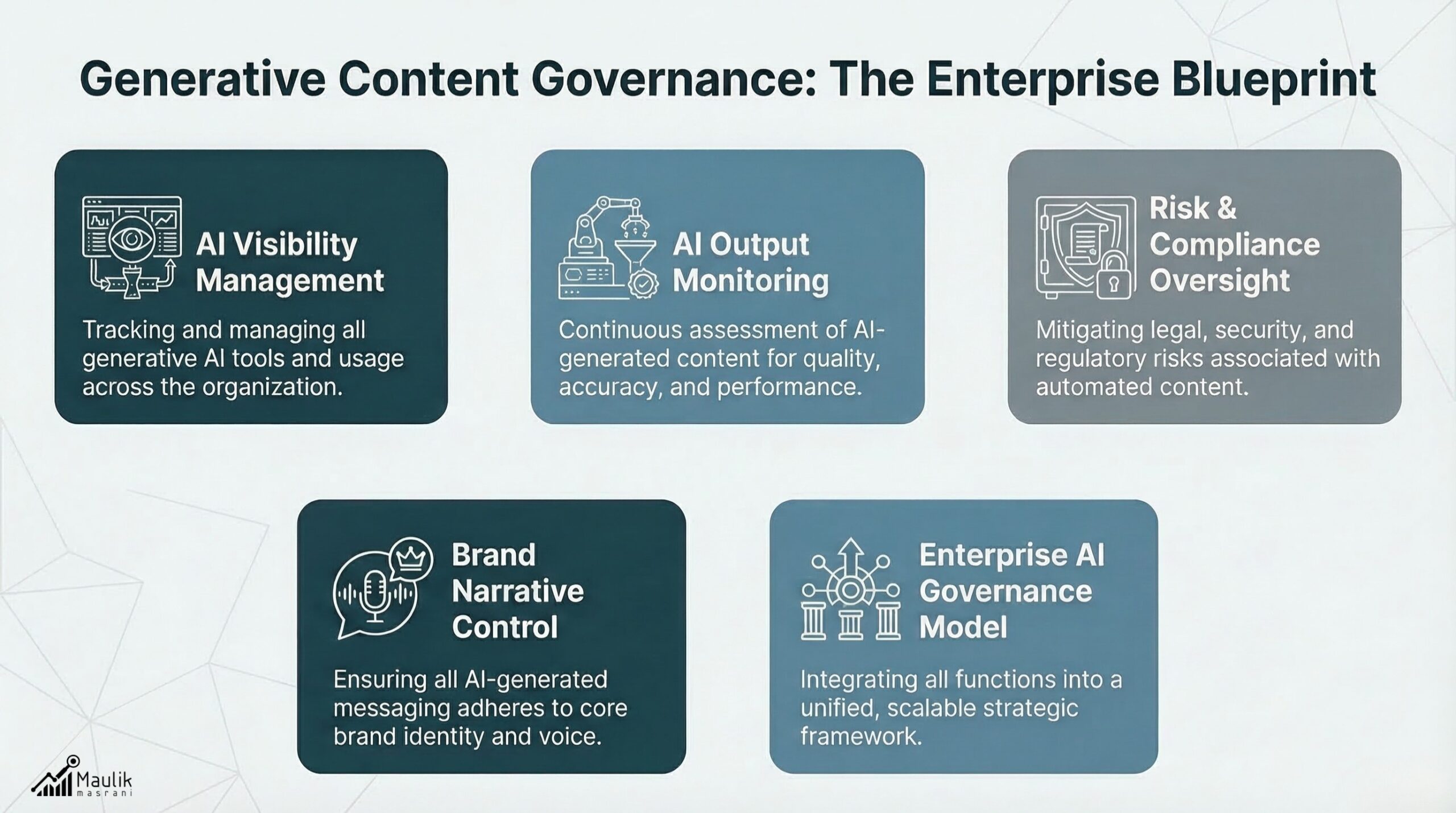

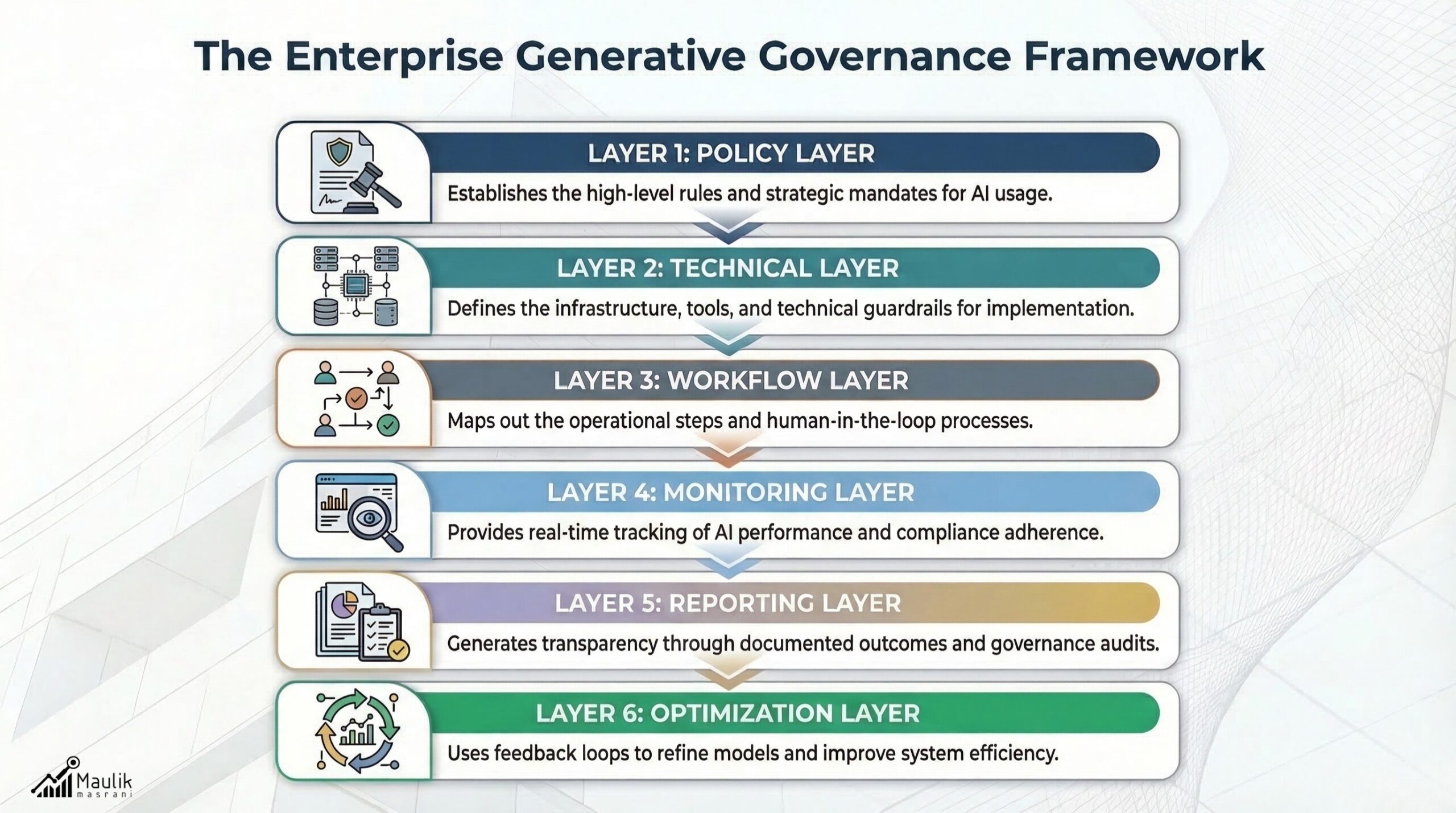

Governance framework model

A scalable AI governance model for enterprise AIO includes six layers:

1. Policy Layer

Defines:

- AI usage permissions

- Risk thresholds

- Escalation workflows

2. Technical Layer

Includes:

- Schema validation

- Metadata consistency checks

- Structured content templates

3. Workflow Layer

Defines:

- Approval hierarchies

- Review checkpoints

- Audit documentation

4. Monitoring Layer

Continuous:

- AI prompt simulations

- Representation analysis

- Competitor comparisons

5. Reporting Layer

KPIs may include:

- AI citation accuracy rate

- Entity consistency score

- AI representation deviation index

- Governance incident frequency
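
The article does not define formulas for these KPIs, so the sketch below uses assumed definitions: accuracy and consistency as simple ratios, and incident frequency normalized to a 30-day window. The deviation index is left out because its definition would be pure guesswork.

```python
from typing import Optional


def ratio(part: int, total: int) -> Optional[float]:
    """Return part/total, or None when there is nothing to measure."""
    if total == 0:
        return None
    return part / total


def citation_accuracy_rate(accurate: int, total_citations: int) -> Optional[float]:
    """Assumed definition: share of observed AI citations judged accurate."""
    return ratio(accurate, total_citations)


def entity_consistency_score(matching: int, checked: int) -> Optional[float]:
    """Assumed definition: share of checked entity fields that match."""
    return ratio(matching, checked)


def incidents_per_30_days(incidents: int, days_observed: int) -> float:
    """Assumed definition: incident count scaled to a 30-day window."""
    if days_observed <= 0:
        raise ValueError("days_observed must be positive")
    return incidents * 30 / days_observed
```

Returning `None` for an empty sample, rather than 0, avoids reporting a misleading score when no citations or fields were actually checked.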

6. Optimization Layer

Refines:

- Knowledge graph alignment

- Internal linking structure

- Structured content reinforcement

This is what true enterprise AIO control looks like: not isolated SEO tactics, but a systemic governance engine.

FAQs

What is AIO governance?

AIO governance is the structured oversight of how AI systems create, interpret, and represent enterprise content. It combines policy, workflow, technical validation and monitoring processes to ensure accurate AI visibility and risk control.

Why is generative governance important for enterprises?

Generative governance prevents misinformation, regulatory violations, and brand misrepresentation at scale. As AI systems increasingly shape search visibility, governance protects enterprise credibility.

How does an AI governance model differ from traditional content governance?

Traditional governance focuses on human publishing workflows. An AI governance model extends oversight to AI-generated outputs, structured data integrity, AI-answer simulations and external representation monitoring.

What metrics should be tracked in enterprise AIO control?

Enterprises should track AI citation accuracy, entity consistency scores, the AI representation deviation index, governance incident frequency, and regulatory compliance alignment.

Conclusion

In an AI-driven search environment, visibility without control becomes liability. Generative governance gives enterprises the structure to manage risk, maintain narrative consistency, and protect brand integrity at scale. Organizations that treat AI oversight as a strategic function, not a side process, will maintain authority, credibility, and competitive stability in the evolving landscape of enterprise AIO.