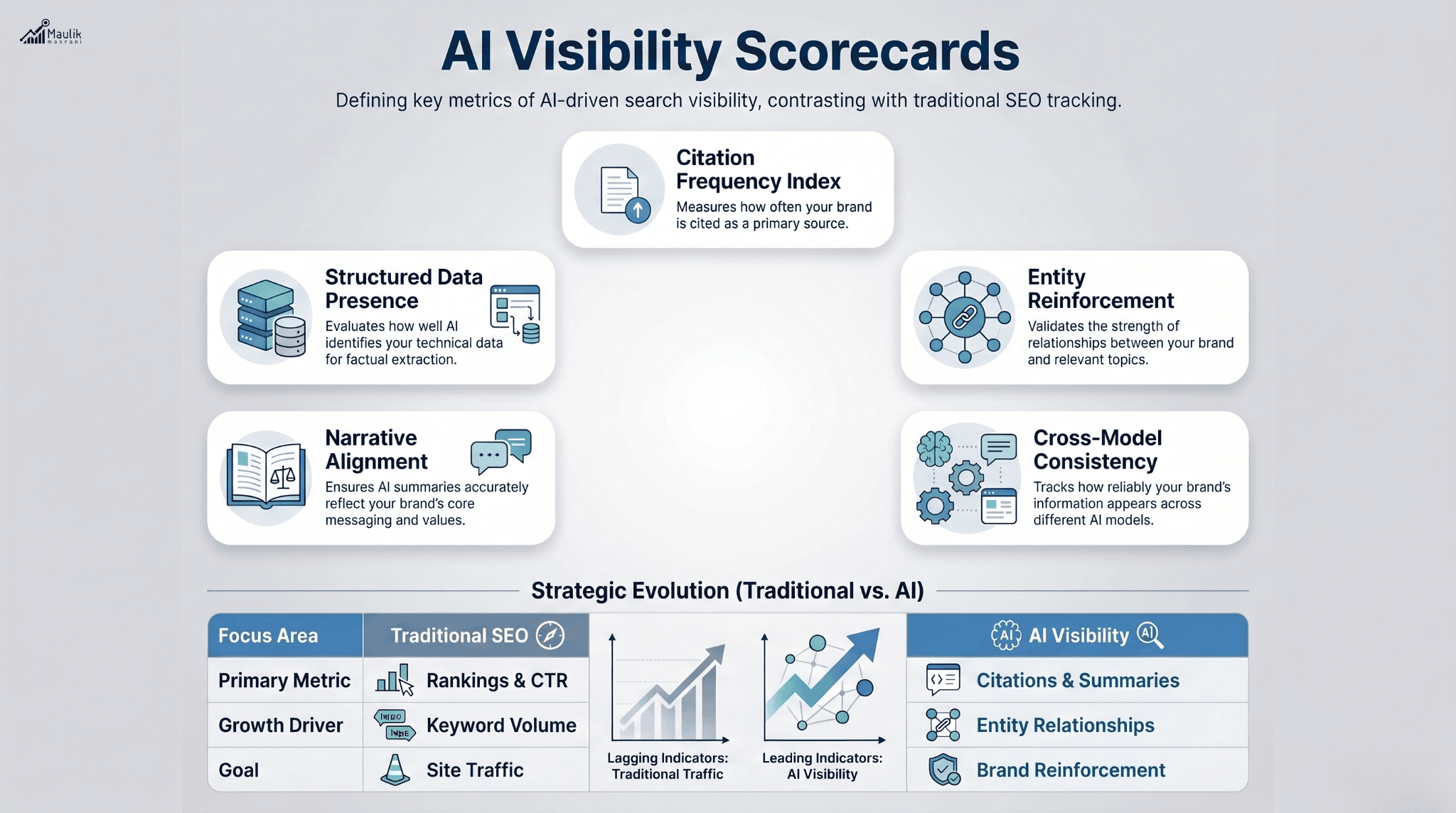

Traditional SEO reports track traffic and rankings, but AI-driven search demands deeper metrics. An AI visibility scorecard standardizes AIO KPI tracking by measuring citation frequency, entity reinforcement and structured performance indicators across AI systems. This guide breaks down the core AI performance measurement framework, introduces quantifiable indices and provides reporting templates to help teams operationalize AIO reporting with precision.

AI Visibility Scorecards

AI search is probabilistic, entity-driven and citation-based. Unlike traditional organic search, where position 1 is clearly measurable, AI-driven visibility operates across generative responses, conversational outputs and summarization layers.

This is where an AI visibility scorecard becomes essential.

Instead of asking:

- “What’s our ranking?”

- “How much traffic did we get?”

You begin asking:

- “How often are we cited in AI responses?”

- “How strongly are our entities reinforced?”

- “Is our narrative consistent across AI systems?”

A standardized scorecard allows marketing leaders, growth teams and executives to measure AIO KPI tracking in a structured, comparable format.

In short, traffic is a lagging indicator. AI visibility is a leading one.

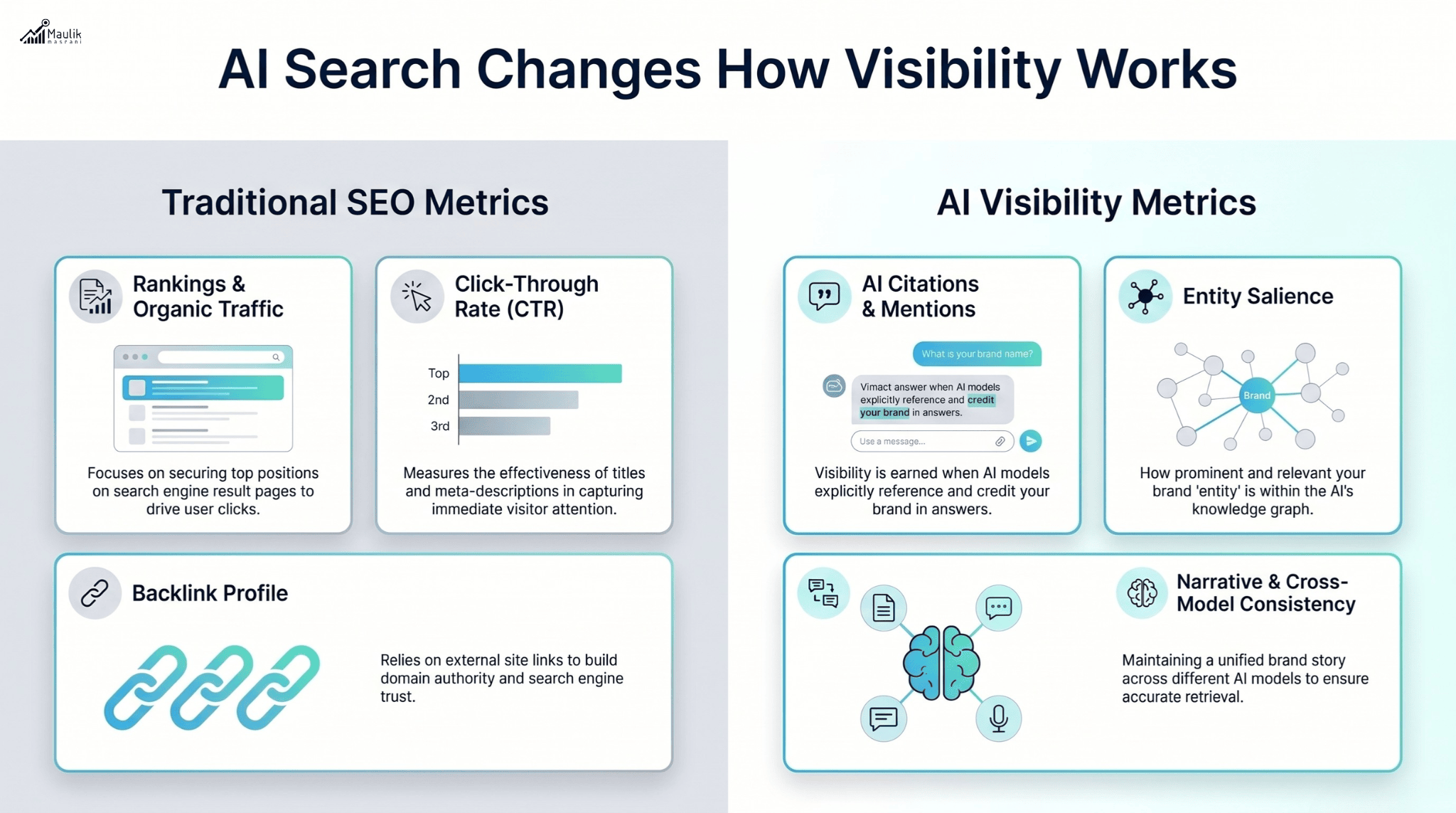

Why Traditional SEO Reports Fail

Most SEO dashboards focus on:

- Keyword rankings

- Organic sessions

- Click-through rates

- Backlink counts

While useful, these metrics fail in AI contexts for three key reasons:

1. AI Answers Replace Clicks

Generative systems summarize answers directly. Your brand may be cited without generating a click. Traditional analytics won’t capture that visibility.

2. Rankings Are Non-Linear

AI responses are not ranked in a visible list. Visibility becomes probabilistic and contextual.

3. Entity Graphs Matter More Than Keywords

Modern AI models rely on structured entity relationships rather than keyword density. Ranking reports ignore entity strength entirely.

Industry research on generative search adoption points to rapid year-over-year growth in conversational AI usage and less reliance on blue-link search results. That shift demands new AI performance measurement models.

Without a standardized scorecard, organizations operate blindly.

Core AI Metrics to Track

An effective AI visibility scorecard must be metrics-driven, repeatable and comparable over time.

Below are the foundational components of robust AIO KPI tracking:

1. AI Citation Frequency

Measures how often your brand or domain appears in AI-generated responses across selected prompts.

Formula (Monthly):

Citation Rate (%) = (Brand Citations / Total Relevant Prompt Outputs) × 100

If your brand appears in 42 out of 200 tracked prompts:

Citation Rate = (42 / 200) × 100 = 21%

This becomes your baseline visibility index.
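As a minimal sketch, the monthly formula fits in a few lines of Python. The function name `citation_rate` is illustrative and not part of any standard tool.

```python
def citation_rate(brand_citations: int, total_outputs: int) -> float:
    """Percentage of tracked prompt outputs that cite the brand."""
    if total_outputs <= 0:
        raise ValueError("total_outputs must be positive")
    return brand_citations * 100 / total_outputs


# The example from the text: 42 citations across 200 tracked prompts.
print(f"{citation_rate(42, 200):.1f}%")  # prints 21.0%
```

Running the same function every month on the same prompt set keeps the baseline comparable over time.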

2. Entity Salience Weight

Measures how strongly your brand is associated with core topical entities.

Example:

If your brand targets “AI governance,” measure how often AI systems associate your brand with:

- Policy frameworks

- Risk management

- Compliance systems

Higher contextual co-occurrence = stronger entity reinforcement.
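One rough way to approximate contextual co-occurrence is to count how often each target entity appears in the same AI response as your brand. The sketch below assumes the responses are already collected as plain text and relies on simple case-insensitive substring matching. The brand name `Acme` and the helper `co_occurrence_rates` are hypothetical.

```python
def co_occurrence_rates(responses, brand, entities):
    """For each entity, the % of brand-mentioning responses that also mention it."""
    brand_hits = [r.lower() for r in responses if brand.lower() in r.lower()]
    if not brand_hits:
        return {entity: 0.0 for entity in entities}
    return {
        entity: sum(entity.lower() in r for r in brand_hits) * 100 / len(brand_hits)
        for entity in entities
    }


# Hypothetical AI responses gathered for "AI governance" prompts.
responses = [
    "Acme offers policy frameworks and risk management for AI governance.",
    "Acme is often cited for its compliance systems.",
    "Several vendors focus on risk management.",
    "Acme publishes widely used policy frameworks.",
]
entities = ["policy frameworks", "risk management", "compliance systems"]
for entity, rate in co_occurrence_rates(responses, "Acme", entities).items():
    print(f"{entity}: {rate:.0f}%")
```

Substring matching misses synonyms and paraphrases, so treat the output as a floor rather than a precise measurement.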

3. Cross-Model Consistency Score

Evaluate performance across:

- Multiple generative engines

- Different query types (informational, strategic, comparative)

- Regional language variations

Consistency indicates structural authority.
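The text does not define a formula for this score, so the sketch below is only one possible interpretation: it treats consistency as 100 minus the relative spread (coefficient of variation) of citation rates across engines. The function name and the engine labels are assumptions.

```python
from statistics import mean, pstdev


def consistency_score(rates_by_model: dict[str, float]) -> float:
    """Return 0-100; 100 means every model shows the same citation rate."""
    rates = list(rates_by_model.values())
    avg = mean(rates)
    if avg == 0:
        return 0.0  # the brand is never cited, so there is nothing to be consistent about
    spread = pstdev(rates) / avg  # coefficient of variation
    return max(0.0, (1 - spread) * 100)


# Hypothetical citation rates (%) for three engines.
rates = {"engine_a": 20, "engine_b": 25, "engine_c": 15}
print(round(consistency_score(rates), 1))  # prints 79.6
```

The same function can be run per query type or per region by passing those groupings instead of engines.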

4. Structured Data Presence

Measure:

- Schema completeness

- FAQ markup presence

- Definition-first clarity

Structured content improves AI extraction probability.
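Schema completeness can be approximated with a simple checklist. The sketch below assumes a page exposes a single top-level JSON-LD object. The list of required fields is illustrative, not an official requirement, and `schema_completeness` is a hypothetical helper.

```python
import json

# Illustrative checklist; adjust it to the schema types you actually publish.
REQUIRED_FIELDS = ("@context", "@type", "name", "url", "description")


def schema_completeness(json_ld: str, required=REQUIRED_FIELDS) -> float:
    """Percentage of required fields that are present and non-empty."""
    data = json.loads(json_ld)
    present = sum(1 for field in required if data.get(field))
    return present * 100 / len(required)


snippet = """
{
  "@context": "https://schema.org",
  "@type": "Organization",
  "name": "Example Co",
  "url": "https://example.com"
}
"""
print(schema_completeness(snippet))  # prints 80.0 (description is missing)
```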

5. Narrative Alignment Index

Analyze whether AI summaries describe your brand using intended positioning language.

For example:

Are you described as:

- “Enterprise authority”

- “Mid-market solution”

- “Emerging tool”

Or misclassified entirely?

Narrative drift reduces strategic control.
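A simple starting point for this index is the share of AI summaries that use your intended positioning language. The sketch below assumes summaries are plain text and uses case-insensitive phrase matching. The helper name and the sample summaries are hypothetical.

```python
def narrative_alignment(summaries, intended_phrases):
    """Percentage of summaries containing at least one intended phrase."""
    if not summaries:
        return 0.0
    phrases = [p.lower() for p in intended_phrases]
    aligned = sum(any(p in s.lower() for p in phrases) for s in summaries)
    return aligned * 100 / len(summaries)


# Hypothetical AI summaries of a brand that wants "enterprise authority" positioning.
summaries = [
    "Example Co is an enterprise authority in AI governance.",
    "Example Co is an emerging tool for small teams.",
    "An enterprise authority, Example Co serves regulated industries.",
    "Example Co is a mid-market solution.",
]
print(narrative_alignment(summaries, ["enterprise authority"]))  # prints 50.0
```

Exact phrase matching is strict. In practice you would likely maintain a short list of acceptable variants for each positioning statement.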

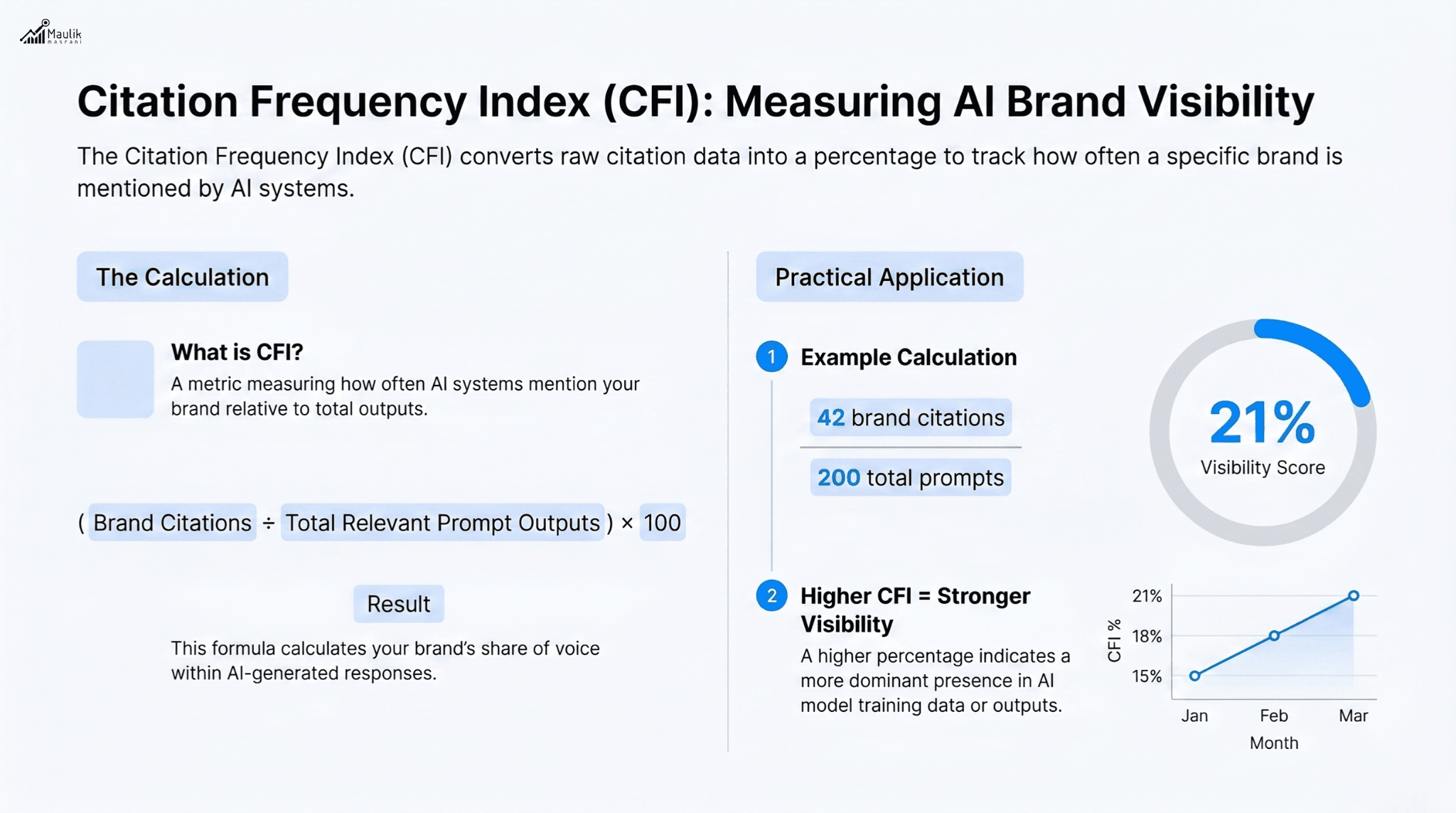

Citation Frequency Index

The Citation Frequency Index (CFI) is the backbone of any AI visibility scorecard.

It answers a simple question:

How often do AI systems mention you?

Step 1: Define Prompt Clusters

Create 50–200 standardized prompts aligned with:

- Core product categories

- Industry comparisons

- Use-case queries

- Strategic advisory questions

Step 2: Run Across Models

Evaluate output from multiple AI engines monthly.

Step 3: Score Mentions

Assign weighted scores:

- Direct brand citation: 2 points

- Indirect reference (URL or product mention): 1 point

- No mention: 0

Step 4: Normalize Score

Convert the total score into a percentage of the maximum possible score for monthly benchmarking.

Example (simplified: each citation counted once, without weights):

| Month | Total Prompts | Citations | CFI |
| --- | --- | --- | --- |
| Jan | 100 | 18 | 18% |
| Feb | 100 | 26 | 26% |

An 8-percentage-point increase (from 18% to 26%) signals stronger AI authority.

Unlike keyword ranking volatility, CFI reveals strategic trajectory.
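One way to read Steps 3 and 4 together is to divide the points earned by the maximum possible points (2 per prompt output). That denominator is an assumption, and the labels `direct`, `indirect` and `none` are placeholder names for the three mention types.

```python
POINTS = {"direct": 2, "indirect": 1, "none": 0}  # weights from Step 3


def weighted_cfi(mentions: list[str]) -> float:
    """Points earned as a percentage of the maximum possible points."""
    if not mentions:
        raise ValueError("mentions must not be empty")
    earned = sum(POINTS[m] for m in mentions)
    max_points = POINTS["direct"] * len(mentions)
    return earned * 100 / max_points


# Hypothetical month: 100 prompt outputs labelled by mention type.
labels = ["direct"] * 10 + ["indirect"] * 8 + ["none"] * 82
print(f"{weighted_cfi(labels):.1f}%")  # prints 14.0%
```

Because indirect references earn half credit, the weighted index will usually sit below a simple count of prompts that mention the brand.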

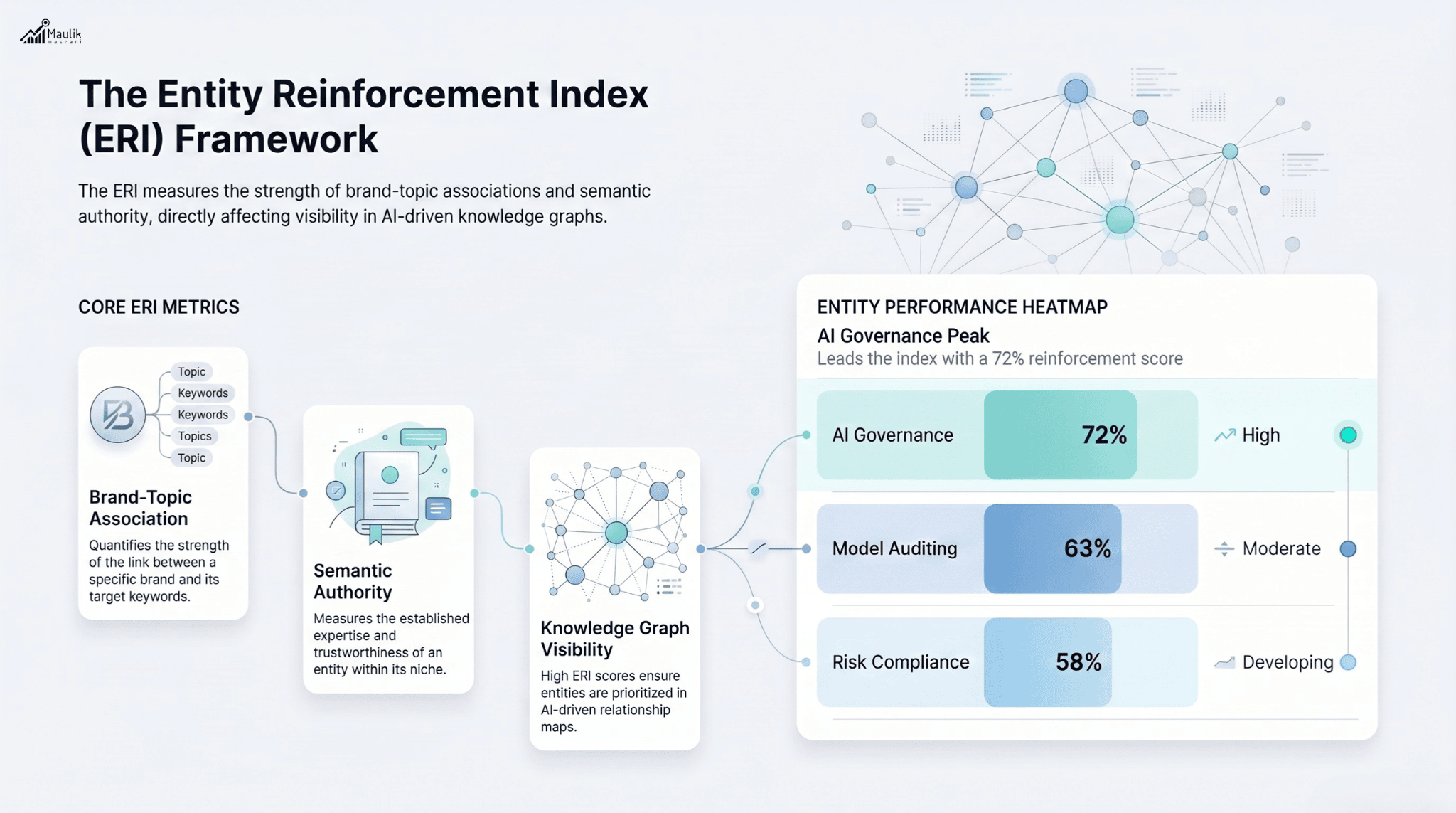

Entity Reinforcement Index

If Citation Frequency measures presence, Entity Reinforcement measures depth.

The Entity Reinforcement Index (ERI) evaluates:

- Entity density within AI summaries

- Correct topical alignment

- Repetition of brand-topic association

Measurement Method

- Identify 10–15 core entities central to your positioning.

- Track co-occurrence frequency with brand mention.

- Score on a weighted average scale.

Example:

| Entity | Brand Co-Occurrence % |
| --- | --- |
| AI Governance | 72% |
| Risk Compliance | 58% |
| Model Auditing | 63% |

Average ERI (equal weights) = (72 + 58 + 63) / 3 ≈ 64.3%

A higher ERI suggests AI systems associate your brand more strongly with its core topics.

Without entity reinforcement, citations remain shallow and unstable.
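The ERI calculation can be sketched as a weighted average of co-occurrence percentages. With no weights supplied it reduces to the plain average used in the example. The function name and the optional `weights` argument are assumptions made for illustration.

```python
def entity_reinforcement_index(co_occurrence, weights=None):
    """Weighted average of brand co-occurrence percentages per entity."""
    if weights is None:
        weights = {entity: 1.0 for entity in co_occurrence}  # equal weighting
    total_weight = sum(weights[entity] for entity in co_occurrence)
    if total_weight == 0:
        raise ValueError("weights must not sum to zero")
    weighted_sum = sum(co_occurrence[e] * weights[e] for e in co_occurrence)
    return weighted_sum / total_weight


scores = {"AI Governance": 72, "Risk Compliance": 58, "Model Auditing": 63}
print(f"{entity_reinforcement_index(scores):.1f}%")  # prints 64.3%

# Hypothetical weighting that counts the primary entity twice as much.
weights = {"AI Governance": 2, "Risk Compliance": 1, "Model Auditing": 1}
print(f"{entity_reinforcement_index(scores, weights):.2f}%")  # prints 66.25%
```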

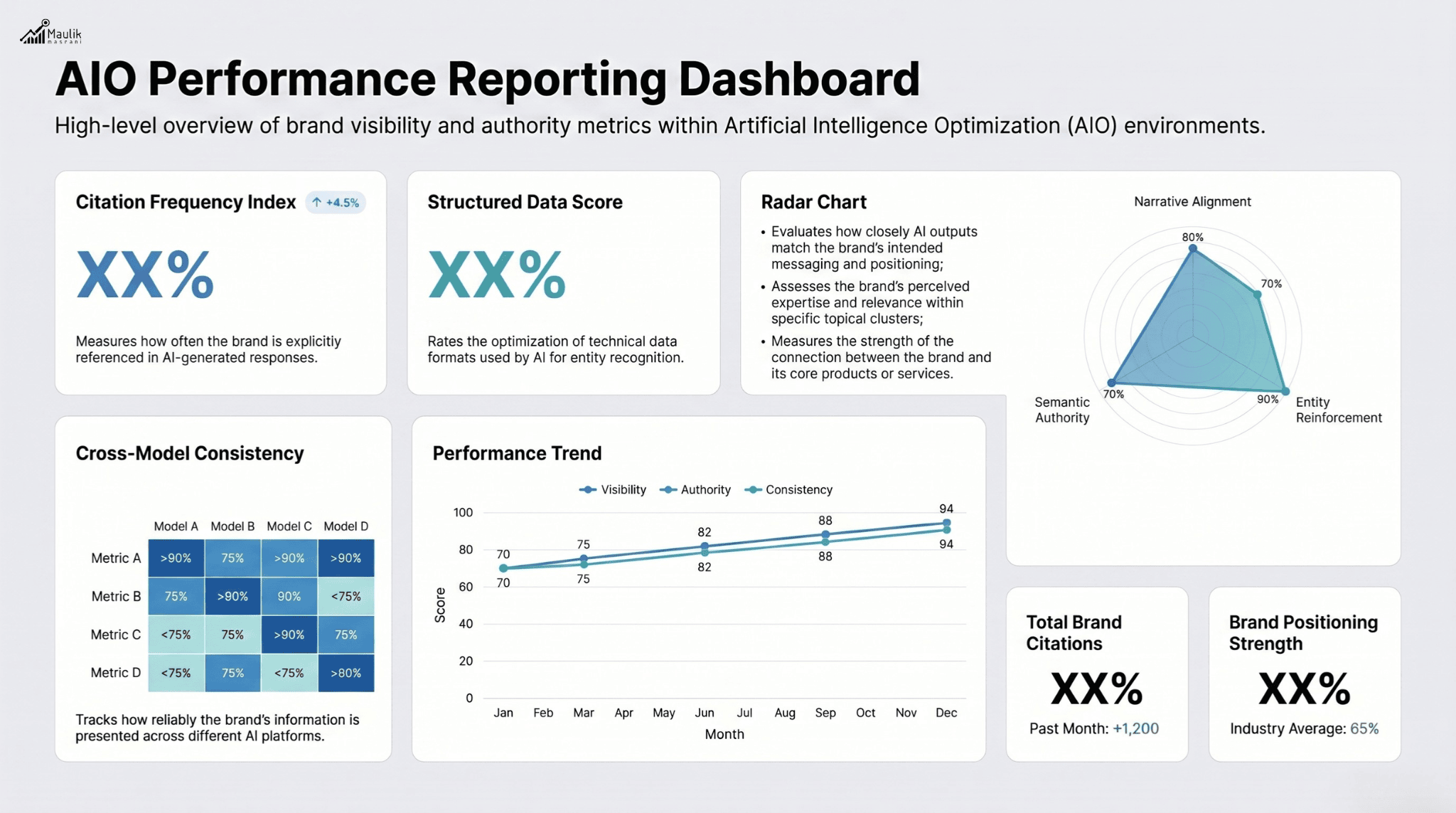

Reporting Templates

Standardization is critical.

An effective AI visibility scorecard reporting template should include:

Executive Summary Section

- Total Citation Frequency Index

- Entity Reinforcement Index

- Cross-Model Consistency Score

- Month-over-Month Change

Detailed Metrics Section

- Citation Breakdown

  - By prompt category

  - By model

  - By region

- Entity Mapping Table

  - Core entity

  - Reinforcement score

  - Gap analysis

- Narrative Drift Review

  - Intended positioning

  - AI-described positioning

  - Alignment score

Visualization Layer

Include:

- Heat maps for entity strength

- Trend graphs for citation growth

- Radar charts for cross-model comparison

Executives need clarity. Operators need depth.

Your reporting template must satisfy both.
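The executive summary section can be modelled as a small record plus a month-over-month comparison. This is a sketch under assumptions: the class name, field names and most sample values are hypothetical, and only the CFI figures come from the Jan/Feb example earlier.

```python
from dataclasses import asdict, dataclass


@dataclass
class ScorecardSummary:
    month: str
    citation_frequency_index: float
    entity_reinforcement_index: float
    cross_model_consistency: float


def month_over_month(current: ScorecardSummary, previous: ScorecardSummary) -> dict[str, float]:
    """Change in each index, in percentage points."""
    cur, prev = asdict(current), asdict(previous)
    return {key: round(cur[key] - prev[key], 1) for key in cur if key != "month"}


# CFI values follow the Jan/Feb example; the other figures are made up.
jan = ScorecardSummary("Jan", 18.0, 61.0, 74.0)
feb = ScorecardSummary("Feb", 26.0, 64.3, 78.0)
for metric, change in month_over_month(feb, jan).items():
    print(f"{metric}: {change:+.1f} points")
```

Keeping the summary as structured data makes it straightforward to feed the same numbers into both the executive view and the detailed tables.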

FAQs

What metrics measure AIO?

The most important metrics for AIO KPI tracking include Citation Frequency Index, Entity Reinforcement Index, Cross-Model Consistency Score and Narrative Alignment Index. These measure AI performance beyond traffic and rankings.

How often should AI visibility scorecards be updated?

Monthly tracking is ideal. High-growth organizations may conduct bi-weekly audits during active optimization cycles.

Can AI visibility exist without website traffic?

Yes. AI systems can cite and summarize your brand without generating clicks. That visibility still builds authority and brand reinforcement within AI ecosystems.

Is traditional SEO still relevant?

Yes, but it must complement AI visibility measurement. Traffic remains important, but it is no longer the sole indicator of digital authority.

Conclusion

AI visibility is becoming a critical performance layer in modern search ecosystems. Traditional SEO metrics alone can no longer explain how brands appear, influence, or reinforce authority inside AI-generated responses. A structured AI visibility scorecard gives organizations a measurable framework to track citations, entity relationships, and narrative consistency across AI systems.

As AI-driven discovery continues to evolve, brands that standardize AIO KPI tracking early will gain stronger semantic authority and long-term visibility advantages. The future of digital reporting will not be defined only by clicks and rankings, but by how effectively AI systems recognize, reference, and trust your brand.