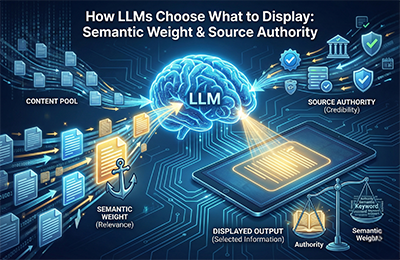

Large Language Models select which sources to display based on semantic authority, meaning how well your content aligns with the question, how clearly your entities are defined and how trustworthy your domain appears in the broader web ecosystem.

LLMs compare semantic patterns, entity clarity and structural quality before generating an answer. Strong metadata, stable signals across the web and consistent topical authority raise your chances of being referenced in AI outputs across ChatGPT, Gemini, Claude and Perplexity.

How LLMs Choose Sources

When an LLM decides which sources to surface or synthesize, it isn’t performing traditional search-ranking mathematics. Instead, it evaluates semantic authority, entity relevance and overall source trust to determine which websites, documents, or datasets align most closely with a user query.

Unlike search engines that return URLs, LLMs return answers, and those answers depend on weighted trust patterns in their training data and retrieval systems. The OpenAI System Cards describe this as a combination of pattern recognition, safety filtering and reinforcement-driven signal preference.

In simple terms: LLMs pick the sources that feel the most consistent, clear, authoritative and semantically aligned with the question at hand.

Semantic Matching Signals

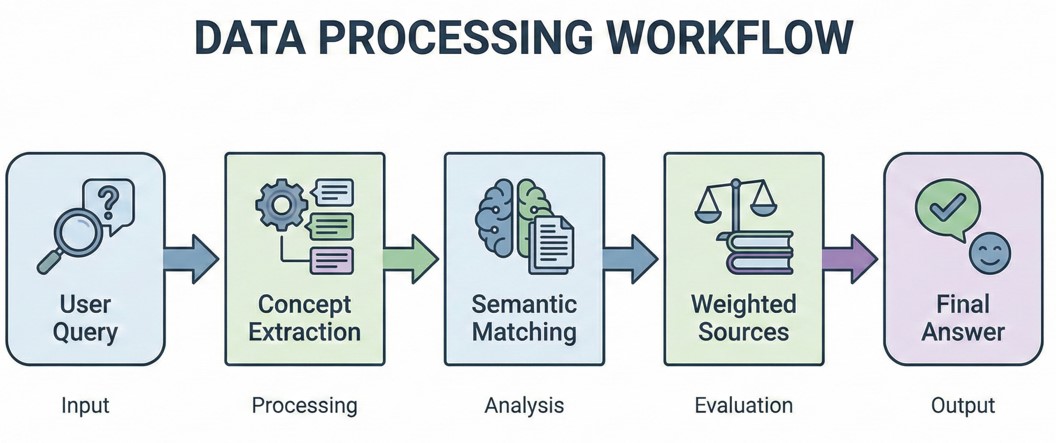

Semantic matching is the first checkpoint. The LLM compares the query against billions of patterns, looking for conceptual alignment rather than keyword density.


Semantic matching examines:

- Topical alignment (Are you covering the exact concept?)

- Depth relevance (Do you go beyond surface-level explanations?)

- Context quality (Do your examples, diagrams and analogies match user intent?)

- Consistency across the web (Do third-party sources describe your content the same way your site does?)

This is where semantic authority influences selection: content that demonstrates clear conceptual depth on key entities and topics is more likely to be weighted heavily when the model composes its answer.
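To make the idea of conceptual alignment concrete, here is a minimal sketch that scores a query against two passages with cosine similarity. It assumes simple word-count vectors as a stand-in for the learned embeddings real systems use, so it only approximates semantic matching. The function names and sample texts are hypothetical.

```python
import math
import re
from collections import Counter


def vectorize(text: str) -> Counter:
    """Bag-of-words count vector; a toy stand-in for a learned embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine of the angle between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical sample texts.
query = "how do llms choose sources"
on_topic = "llms choose sources by comparing semantic alignment and entity clarity"
off_topic = "our bakery offers fresh bread and pastries every morning"

q = vectorize(query)
score_on = cosine_similarity(q, vectorize(on_topic))
score_off = cosine_similarity(q, vectorize(off_topic))
print(f"on-topic: {score_on:.3f}, off-topic: {score_off:.3f}")
```

The on-topic passage scores higher because it shares terms with the query, while the off-topic passage shares none. With real embeddings, passages can score well without sharing exact words, which is the point of matching concepts rather than keywords.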

Entity Strength & Clarity

Entities are the backbone of LLM reasoning. When an LLM detects well-structured entities tied to your brand, such as products, services, or subject matter, it gains confidence in your source.

Entity relevance is influenced by:

- How consistently your brand appears across the web

- Whether your brand is associated with specific topics

- Presence of structured data and schema

- Clarity of names, roles and relationships between topics

- How your content defines, explains and contextualizes your expertise

If an entity is fuzzy, inconsistent, or appears contradictory across platforms, LLMs reduce its weighting.

This means brands with strong topical identity supported by schema often outrank larger but less coherent competitors within LLM-driven answers.
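One rough way to audit entity consistency yourself is to compare how your brand is described on different platforms. The sketch below uses mean pairwise Jaccard overlap of word sets as a crude proxy. It is not how any LLM measures entities, and the brand name "Acme", the platform keys and the descriptions are all hypothetical.

```python
import re
from itertools import combinations


def tokens(text: str) -> set[str]:
    """Lowercased word set for a description."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Shared words divided by total distinct words."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def consistency(descriptions: dict[str, str]) -> float:
    """Mean pairwise Jaccard overlap across platform descriptions."""
    pairs = list(combinations(descriptions.values(), 2))
    if not pairs:
        return 1.0
    return sum(jaccard(tokens(x), tokens(y)) for x, y in pairs) / len(pairs)


# Hypothetical brand descriptions gathered from three platforms.
consistent_brand = {
    "website": "Acme builds analytics software for retailers",
    "linkedin": "Acme builds analytics software for retailers worldwide",
    "directory": "Acme: analytics software for retailers",
}
fuzzy_brand = {
    "website": "Acme builds analytics software for retailers",
    "linkedin": "Acme is a creative marketing agency",
    "directory": "Acme sells office furniture",
}

print(f"consistent: {consistency(consistent_brand):.2f}")
print(f"fuzzy: {consistency(fuzzy_brand):.2f}")
```

A low score flags descriptions that contradict one another, which is the kind of fuzziness described above.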

Importance of Source Authority

Source authority is not the same as traditional domain authority. LLMs prioritize trust signals accumulated during training and reinforced through retrieval.

Authority is determined by:

- Historical reliability of the source

- How often other trusted datasets reference that source

- Clear authorship, citations and transparent structure

- Accuracy patterns detected over time

- Alignment with widely accepted knowledge graphs

Why Some Sites Are Favored

A site with moderate SEO authority but high semantic precision and strong topical consistency may be treated as more trustworthy than a high-domain-authority site with mixed or inconsistent content.

This is where content weighting becomes critical. LLMs and their retrieval systems effectively weight sources internally, and that weighting influences how likely your content is to appear in generative answers.
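The trade-off between semantic precision and domain authority can be pictured as a weighted sum. The sketch below is purely illustrative: no LLM vendor publishes such a formula, and the signal names, weights and example values (all on a 0 to 1 scale) are assumptions made up for this example.

```python
from dataclasses import dataclass

# Hypothetical weights for illustration only; they do not come from any real system.
WEIGHTS = {
    "semantic_precision": 0.4,
    "topical_consistency": 0.3,
    "entity_clarity": 0.2,
    "domain_authority": 0.1,
}


@dataclass
class SourceSignals:
    """Assumed per-source signals, each normalized to the range 0..1."""
    semantic_precision: float
    topical_consistency: float
    entity_clarity: float
    domain_authority: float


def weighted_score(signals: SourceSignals) -> float:
    """Weighted sum of the signals using the hypothetical WEIGHTS."""
    return sum(w * getattr(signals, name) for name, w in WEIGHTS.items())


# Made-up example values for two sites.
precise_site = SourceSignals(0.9, 0.9, 0.8, 0.5)
big_site = SourceSignals(0.5, 0.4, 0.5, 0.95)

print(f"precise site: {weighted_score(precise_site):.3f}")
print(f"big site: {weighted_score(big_site):.3f}")
```

Under these assumed weights, the site with moderate domain authority but high precision and consistency comes out ahead, mirroring the claim above.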

Metadata & Structure Impact

Metadata is not just for SEO; it directly improves semantic clarity for AI systems.

LLMs benefit from:

- Clean H1–H3 hierarchy

- Schema types like BlogPosting and FAQPage

- Logical paragraph segmentation

- Alt text describing real entities

- Internal links that establish contextual relationships

Even simple formatting creates clarity. Structured content reduces ambiguity, which increases the probability of your site appearing in generative answers.
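As an example of the schema types mentioned above, the following sketch builds BlogPosting JSON-LD with Python's standard `json` module. The property names come from schema.org; the helper function, author name, date and URL are placeholders, not values from this article.

```python
import json


def blog_posting_jsonld(headline: str, author_name: str,
                        date_published: str, url: str) -> str:
    """Serialize a minimal schema.org BlogPosting object as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "BlogPosting",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,
        "mainEntityOfPage": url,
    }
    return json.dumps(data, indent=2)


markup = blog_posting_jsonld(
    headline="How LLMs Choose Sources",
    author_name="Jane Doe",              # placeholder author
    date_published="2025-01-01",         # placeholder date
    url="https://example.com/how-llms-choose-sources",  # placeholder URL
)

# Wrap the JSON-LD in the script tag that goes into the page's HTML.
script_tag = f'<script type="application/ld+json">\n{markup}\n</script>'
print(script_tag)
```

Generating the markup from one function keeps the entity names and dates identical wherever they appear, which supports the consistency this section argues for.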

Case Example: Two Brands, Different Authority

Imagine two brands publishing content on the same topic.

Brand A

- Clean content structure

- Strong metadata

- Clear entity usage

- Consistent explanations across social profiles, blogs and external references

- Publishes deeply researched articles with diagrams

Brand B

- High domain authority

- Content includes mixed terminology

- Entities are described differently across pages

- Shallow coverage of the topic

- Lacks structured schema markup

LLM Outcome

Brand A is far more likely to be chosen by the LLM, even if traditional SEO metrics place Brand B higher in search rankings.

Why?

Because Brand A demonstrates semantic authority, clear entity positioning and predictable trust signals. LLMs prefer sources with lower ambiguity, higher precision and stable reference alignment.

This is why improving entity design and structured metadata can dramatically shift your brand’s visibility inside generative search environments.

FAQs

Q1: How does AI choose sources?

AI models select sources based on semantic alignment, entity clarity and trust signals derived from training data, structured metadata and consistent topical authority.

Q2: Why does AI trust some websites more than others?

LLMs trust websites that show stable accuracy patterns, strong entity consistency, clean structure and authority signals reinforced across multiple datasets and references.

Q3: How can a website increase its semantic authority with LLMs?

By improving entity clarity, adding structured schema, publishing deep topical content and maintaining consistent terminology across all platforms.

Q4: Does domain authority matter in LLM selection?

Yes, but only indirectly. Semantic quality, structured content, and trust signals often outweigh traditional SEO metrics in generative AI systems.
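An FAQ section like this one can itself be exposed as FAQPage markup. The sketch below, with a hypothetical helper name, builds the JSON-LD from question and answer pairs using schema.org's Question and Answer types; two of the questions above are used as sample input.

```python
import json


def faq_page_jsonld(qa_pairs: list[tuple[str, str]]) -> str:
    """Serialize question/answer pairs as schema.org FAQPage JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)


faqs = [
    (
        "How does AI choose sources?",
        "AI models select sources based on semantic alignment, entity clarity "
        "and trust signals derived from training data, structured metadata "
        "and consistent topical authority.",
    ),
    (
        "Does domain authority matter in LLM selection?",
        "Yes, but only indirectly. Semantic quality, structured content, and "
        "trust signals often outweigh traditional SEO metrics in generative "
        "AI systems.",
    ),
]

faq_markup = faq_page_jsonld(faqs)
print(faq_markup)
```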