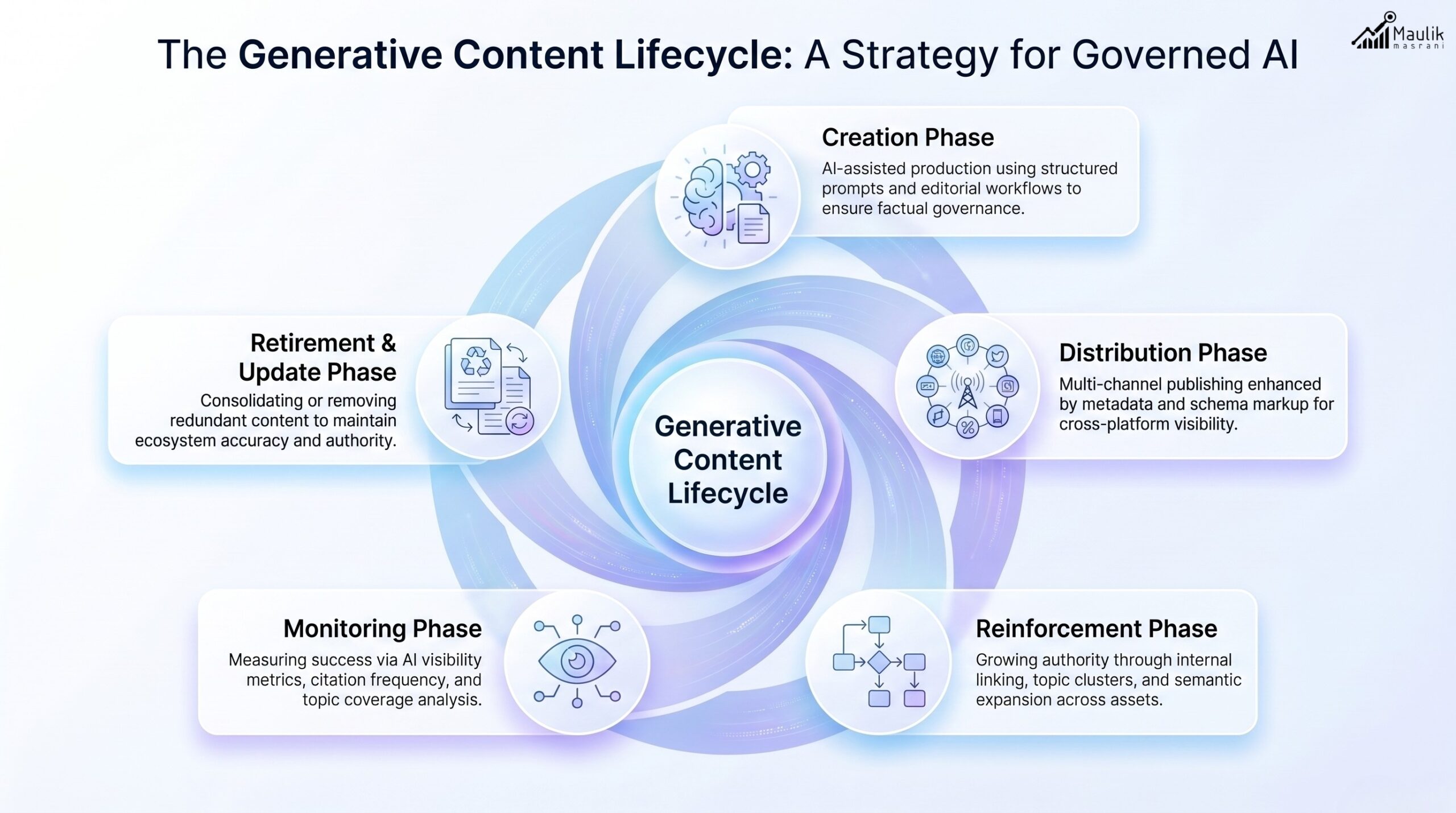

The generative content lifecycle is no longer linear; it is dynamic, iterative and AI-governed. Organizations that adopt a structured AI content lifecycle model across creation, distribution, reinforcement, monitoring and retirement phases achieve higher AI visibility, stronger compliance and measurable ROI. This guide outlines a maturity-based framework for operationalizing AIO governance across the full lifecycle of generative content.

Generative Content Lifecycle

The AI era has fundamentally reshaped how content is produced, distributed and evaluated. Traditional editorial workflows assumed predictable publishing cycles. Generative systems powered by large language models and AI-driven search operate differently.

Content now:

- Evolves continuously

- Gains visibility across AI engines

- Influences entity authority over time

- Requires structured oversight

The generative content lifecycle recognizes this shift. Instead of viewing content as a one-time asset, it treats it as a governed digital entity with measurable stages and maturity checkpoints.

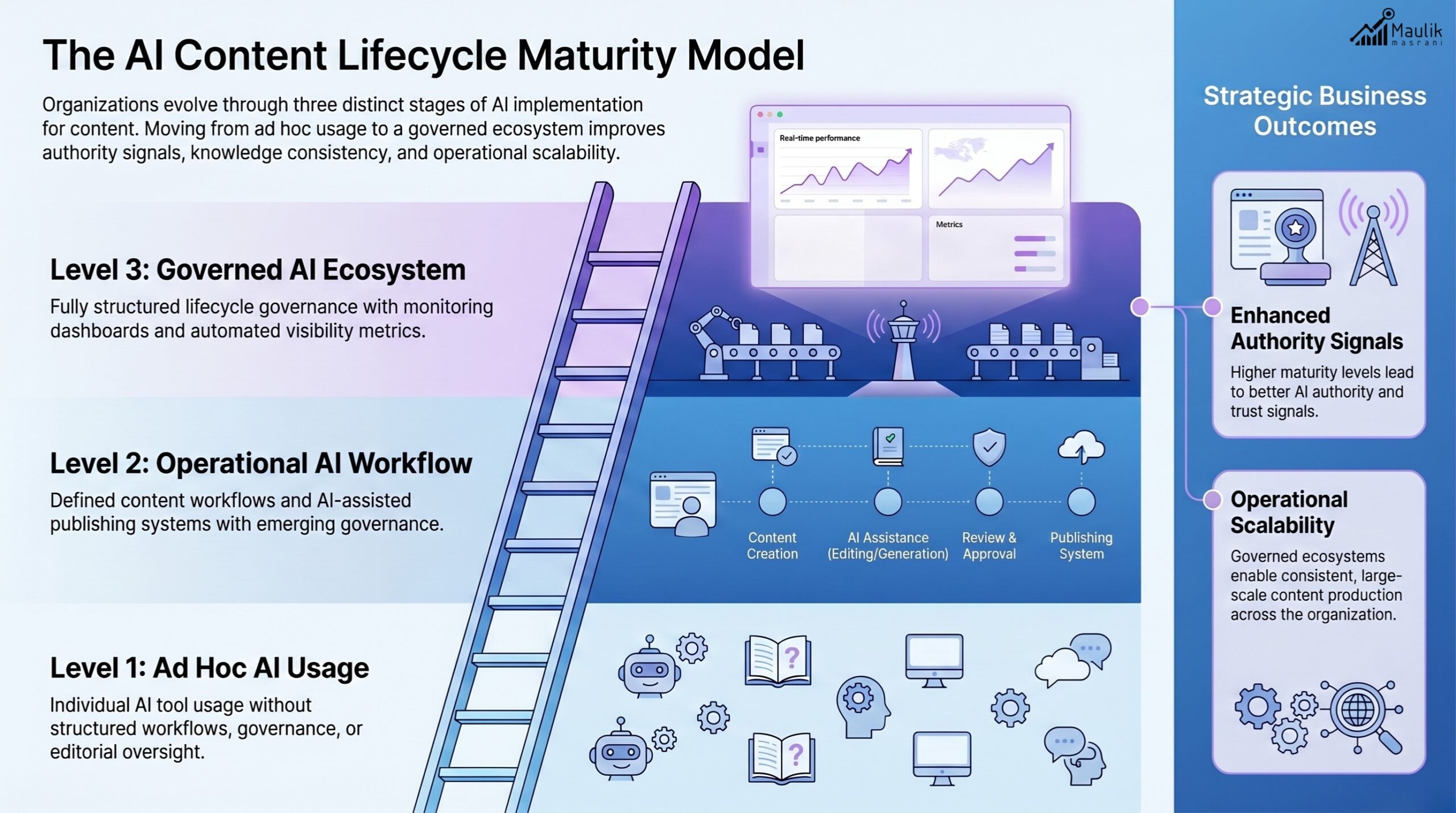

Organizations typically move through three lifecycle maturity levels:

- Level 1: Ad Hoc AI Usage – AI tools used without structured oversight

- Level 2: Operational AI Workflow – Defined content pipelines but limited governance

- Level 3: Governed AI Ecosystem – Lifecycle automation, monitoring systems and executive oversight

Let’s break down each phase.

Creation Phase

The creation phase is where the AI content lifecycle model begins. However, maturity here is not about speed; it is about structure.

Governance Priorities in Creation

- Definition-first writing approach

- Entity alignment and semantic consistency

- Source validation and citation integrity

- Brand voice calibration

- Prompt documentation

According to industry AI adoption research, over 60% of organizations using generative AI lack formal documentation standards. Without those standards, the risk of factual drift and brand inconsistency increases.

A mature creation phase includes:

- Controlled prompt libraries

- Structured templates

- Fact-check workflows

- AI + human editorial checkpoints

At Level 1 maturity, teams focus on output volume.

At Level 3 maturity, teams focus on AI citation reliability and entity clarity.

Governance transforms content creation from reactive publishing into a predictable authority-building engine.
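One way to picture a controlled prompt library with an editorial checkpoint is a small registry that refuses to serve undocumented or unapproved prompts. This is a minimal sketch under assumptions: the `PromptRecord` fields, the `PromptLibrary` class and the sample prompt are hypothetical names chosen for illustration, not part of any specific tool.

```python
from dataclasses import dataclass
from string import Template


@dataclass(frozen=True)
class PromptRecord:
    """One documented, versioned prompt (hypothetical schema)."""
    prompt_id: str
    version: int
    owner: str
    template: str            # uses $placeholders, see string.Template
    approved: bool = False   # set to True after the human editorial checkpoint


class PromptLibrary:
    """In-memory registry that only renders approved prompts."""

    def __init__(self) -> None:
        self._records: dict[tuple[str, int], PromptRecord] = {}

    def register(self, record: PromptRecord) -> None:
        key = (record.prompt_id, record.version)
        if key in self._records:
            raise ValueError(f"{record.prompt_id} v{record.version} is already documented")
        self._records[key] = record

    def render(self, prompt_id: str, version: int, **values: str) -> str:
        record = self._records[(prompt_id, version)]
        if not record.approved:
            raise PermissionError(f"{prompt_id} v{version} has not been approved")
        # substitute() raises KeyError if a placeholder is left unfilled
        return Template(record.template).substitute(**values)


library = PromptLibrary()
library.register(PromptRecord(
    prompt_id="definition-first-intro",
    version=1,
    owner="editorial",
    template="Define $term in one sentence, then explain why it matters to $audience.",
    approved=True,
))
rendered = library.render(
    "definition-first-intro", 1, term="content drift", audience="marketing leads"
)
```

Keeping the version in the lookup key means an edited prompt has to be registered and approved again, which is the documentation habit the creation phase calls for.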

Distribution Phase

Publishing is no longer just about pushing content live.

The distribution phase determines how AI systems ingest, interpret and amplify your content.

Distribution in the AI Era Includes:

- Structured schema implementation

- Metadata enrichment

- Multi-format deployment

- Cross-platform reinforcement

- Semantic linking strategies
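As an illustration of structured schema implementation and metadata enrichment, the sketch below builds schema.org `Article` markup as JSON-LD. The helper name `article_jsonld`, the organization name and the dates are placeholders assumed for the example; the property names (`headline`, `author`, `datePublished`, `dateModified`, `about`) come from the schema.org vocabulary.

```python
import json
from datetime import date


def article_jsonld(headline: str, author: str, published: date,
                   modified: date, about: list[str]) -> str:
    """Return a schema.org Article description as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Organization", "name": author},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
        # 'about' names the entities the page covers
        "about": [{"@type": "Thing", "name": name} for name in about],
    }
    return json.dumps(data, indent=2)


markup = article_jsonld(
    headline="Generative Content Lifecycle",
    author="Example Co",                 # placeholder organization
    published=date(2025, 1, 15),         # placeholder dates
    modified=date(2025, 3, 1),
    about=["AI content lifecycle", "AIO governance"],
)
```

The resulting string would typically be embedded in the page inside a `<script type="application/ld+json">` element.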

Generative engines favor consistent reinforcement across multiple surfaces. A single blog post may influence:

- AI summaries

- Featured snippets

- LLM-generated answers

- Voice search results

In a governed lifecycle, distribution is mapped intentionally.

Maturity Signals:

- Basic Level: Single-channel blog publishing

- Intermediate Level: Blog + social + email repurposing

- Advanced Level: Structured multi-format reinforcement (text, video, summary layers, FAQs)

Organizations practicing structured distribution see improved AI citation frequency over time.

Reinforcement Phase

Reinforcement is where authority compounds.

Unlike traditional SEO models that rely heavily on backlinks, the generative environment values:

- Topical clustering

- Entity reinforcement

- Consistent definitions

- Contextual expansion

The reinforcement phase ensures that a topic is not published once, but built as a knowledge cluster.

For example:

- Core guide

- Supporting articles

- FAQs

- Executive summaries

- Data-backed updates

This layered structure increases semantic trust and AI recall probability.

Governance in Reinforcement

A mature reinforcement model tracks:

- Topic saturation

- Internal knowledge gaps

- Entity alignment across assets

- Cross-channel semantic harmony

Without reinforcement governance, content fragments into isolated assets. With governance, it becomes a knowledge graph.
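Tracking internal knowledge gaps can start with a simple inventory check: for each topic, which layers of the cluster are still missing? The sketch below assumes a hypothetical inventory format (dictionaries with `topic` and `type` keys) and treats the five asset types from the example above as the required set.

```python
# Asset types taken from the cluster example; the identifiers are illustrative.
REQUIRED_ASSET_TYPES = {
    "core_guide", "supporting_article", "faq", "executive_summary", "data_update",
}


def cluster_gaps(assets: list[dict]) -> dict[str, set[str]]:
    """Map each topic to the asset types it is still missing."""
    present: dict[str, set[str]] = {}
    for asset in assets:
        present.setdefault(asset["topic"], set()).add(asset["type"])
    return {topic: REQUIRED_ASSET_TYPES - types for topic, types in present.items()}


inventory = [
    {"topic": "ai-content-lifecycle", "type": "core_guide"},
    {"topic": "ai-content-lifecycle", "type": "faq"},
    {"topic": "ai-content-lifecycle", "type": "supporting_article"},
    {"topic": "aio-governance", "type": "core_guide"},
]
gaps = cluster_gaps(inventory)
```

A topic with an empty gap set has every layer in place; anything else is a candidate for the next reinforcement cycle.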

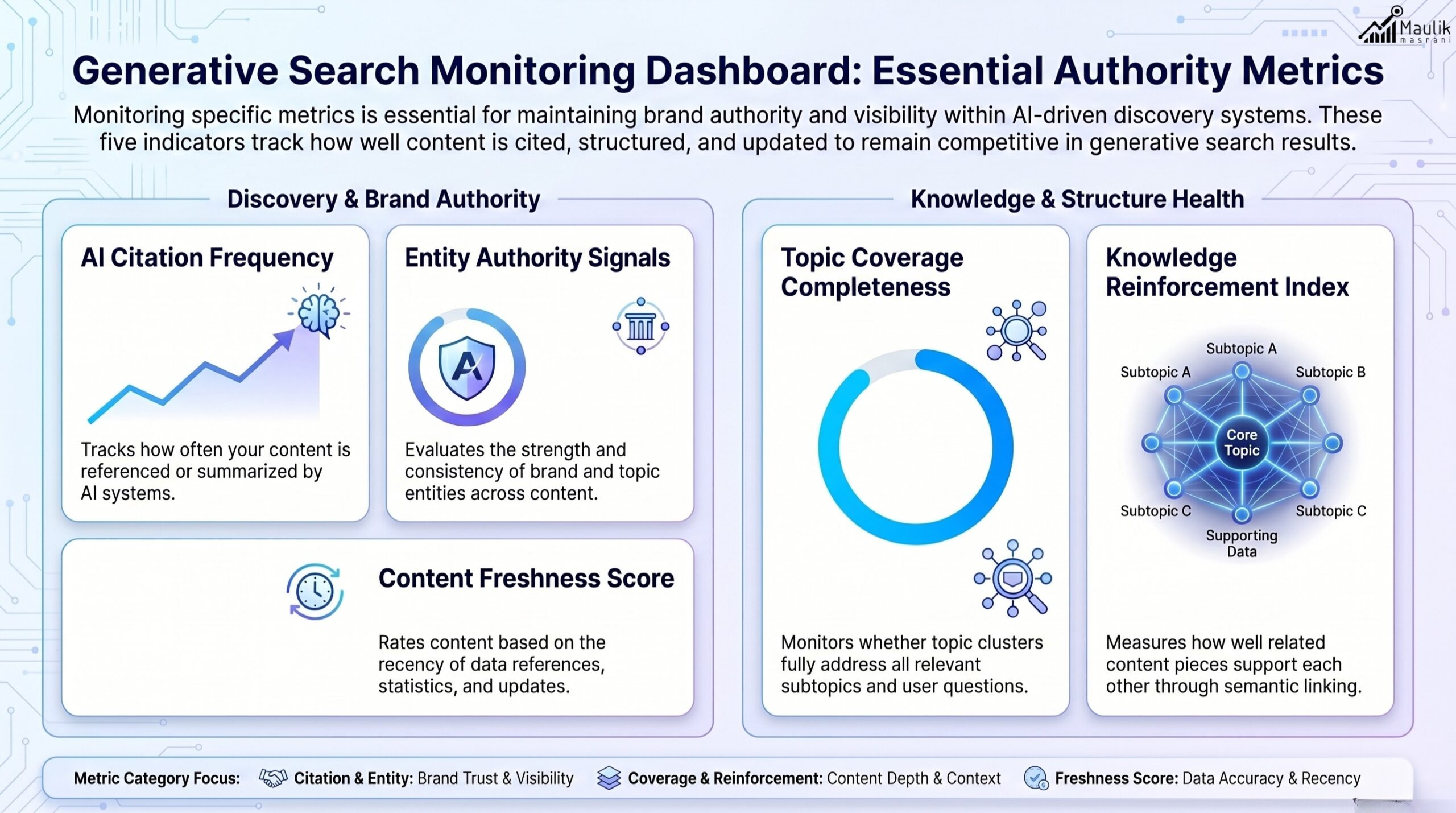

Monitoring Phase

The monitoring phase differentiates AI experimentation from AI maturity.

Governed organizations measure performance beyond traffic.

Monitoring Metrics in a Generative Content Lifecycle

- AI citation frequency

- Entity salience score

- Topic coverage completeness

- Content drift indicators

- Cross-model consistency
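To make a metric such as AI citation frequency concrete, here is one possible way to compute it from a sample of AI answers. The record format (`engine`, `cited_urls`), the engine names and the domain are assumptions for illustration; how the answers are sampled is outside the scope of the sketch.

```python
from collections import defaultdict


def citation_frequency(samples: list[dict], domain: str) -> dict[str, float]:
    """Share of sampled answers per engine that cite `domain`."""
    totals: dict[str, int] = defaultdict(int)
    cited: dict[str, int] = defaultdict(int)
    for sample in samples:
        engine = sample["engine"]
        totals[engine] += 1
        # Substring match is a simplification; a real check would parse the URL host.
        if any(domain in url for url in sample["cited_urls"]):
            cited[engine] += 1
    return {engine: cited[engine] / totals[engine] for engine in totals}


samples = [
    {"engine": "engine-a", "cited_urls": ["https://example.com/guide"]},
    {"engine": "engine-a", "cited_urls": ["https://other.org/post"]},
    {"engine": "engine-b", "cited_urls": []},
]
frequency = citation_frequency(samples, "example.com")

# A rough cross-model consistency proxy (an assumption, not a standard metric):
# the spread between the best and worst engine.
spread = max(frequency.values()) - min(frequency.values())
```

Tracked over time, a falling frequency or a widening spread would be a signal to revisit reinforcement for that topic.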

Research indicates that AI-generated summaries can change within weeks if reinforcement and monitoring are weak. Monitoring ensures stability.

Maturity Model for Monitoring

- Level 1: Traffic-based metrics only

- Level 2: Engagement + ranking visibility

- Level 3: AI visibility metrics and generative ranking KPIs

Monitoring must include periodic re-validation of:

- Data accuracy

- Regulatory alignment

- Brand messaging consistency

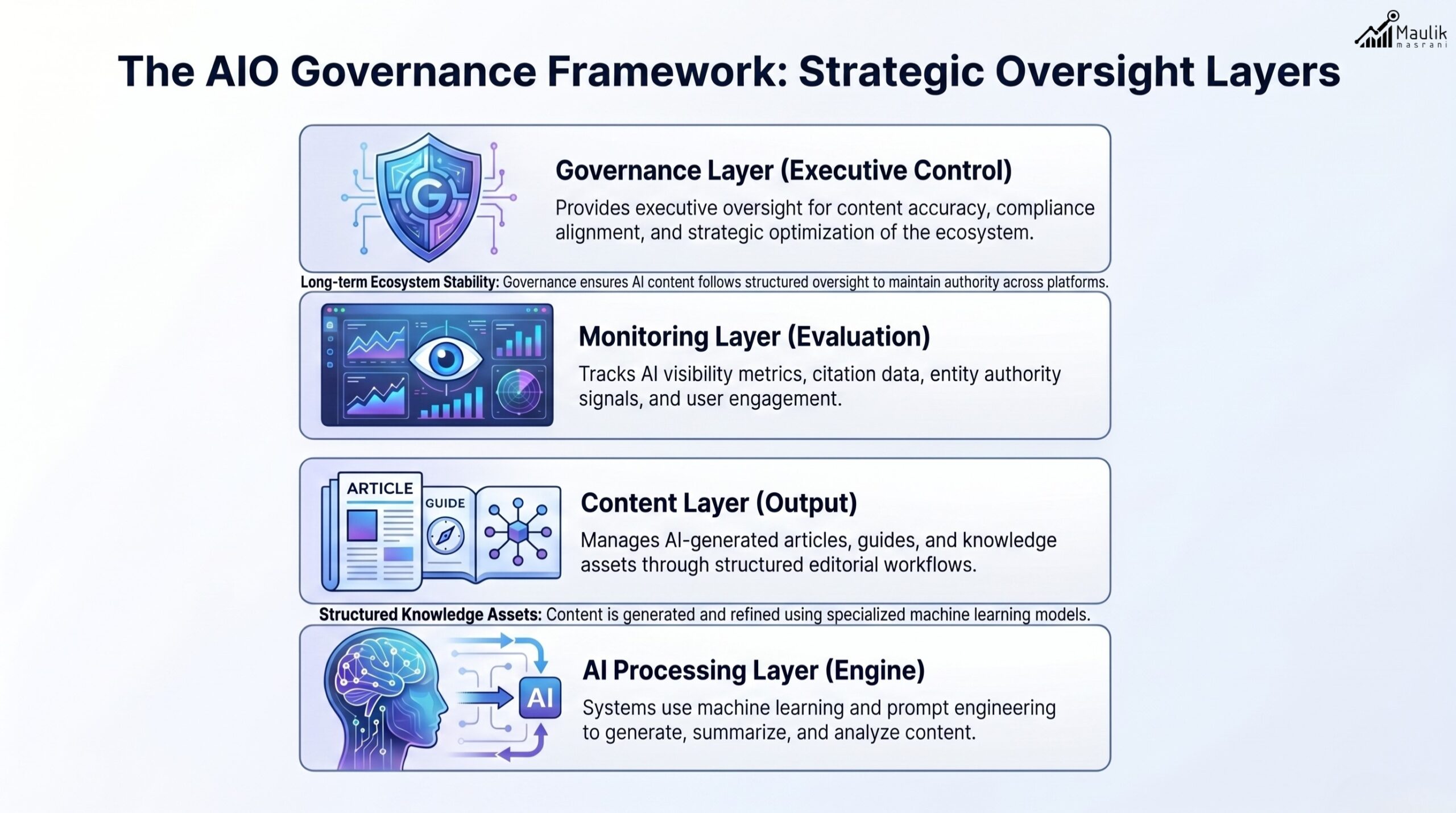

This is where AIO governance becomes critical. Without oversight, generative outputs drift. With governance, authority compounds predictably.

Retirement & Update Phase

In the AI era, outdated content becomes a liability.

Old statistics, obsolete frameworks, or regulatory changes can propagate misinformation across AI engines.

The retirement and update phase ensures lifecycle hygiene.

Governance Controls

- Content freshness audits

- Time-sensitive data flags

- Automated review reminders

- Consolidation of overlapping content

- Redirect management
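A content freshness audit with time-sensitive data flags can be as small as a date comparison. In this sketch the `ContentItem` fields, the URLs and the review intervals (365 days by default, 90 days for time-sensitive pages) are assumed values chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ContentItem:
    url: str
    last_reviewed: date
    time_sensitive: bool = False   # e.g. pages that quote statistics or regulations


def due_for_review(items: list[ContentItem], today: date,
                   standard: timedelta = timedelta(days=365),
                   sensitive: timedelta = timedelta(days=90)) -> list[str]:
    """Return URLs whose last review is older than their allowed interval."""
    due = []
    for item in items:
        limit = sensitive if item.time_sensitive else standard
        if today - item.last_reviewed > limit:
            due.append(item.url)
    return due


items = [
    ContentItem("/pricing-stats", date(2025, 1, 1), time_sensitive=True),
    ContentItem("/evergreen-guide", date(2025, 1, 1)),
    ContentItem("/old-framework", date(2024, 3, 1)),
]
overdue = due_for_review(items, today=date(2025, 6, 1))
```

Running a check like this on a schedule is one way to drive the automated review reminders listed above.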

Mature organizations treat retirement as strategic pruning, not deletion.

They identify:

- Underperforming assets

- Redundant pages

- Inaccurate content clusters

Then decide to:

- Update

- Consolidate

- Archive

- Remove
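The choice between these outcomes can be written down as an explicit rule so that it is applied consistently. The ordering below is only one plausible policy, assumed for illustration; the flag names and the extra `KEEP` outcome are hypothetical.

```python
from enum import Enum


class Action(Enum):
    UPDATE = "update"
    CONSOLIDATE = "consolidate"
    ARCHIVE = "archive"
    REMOVE = "remove"
    KEEP = "keep"


def retirement_action(*, inaccurate: bool, redundant: bool,
                      underperforming: bool, still_relevant: bool) -> Action:
    """Pick one outcome per asset; accuracy problems are handled first."""
    if inaccurate:
        # Fix it if the topic still matters, otherwise take it down.
        return Action.UPDATE if still_relevant else Action.REMOVE
    if redundant:
        return Action.CONSOLIDATE
    if underperforming:
        return Action.UPDATE if still_relevant else Action.ARCHIVE
    return Action.KEEP


decision = retirement_action(
    inaccurate=False, redundant=True, underperforming=True, still_relevant=True
)
```

Encoding the policy this way also leaves an audit trail: the inputs and the resulting action can be logged for each asset.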

Lifecycle maturity ensures your knowledge base remains clean, accurate and authoritative.

Lifecycle Automation

Automation is the multiplier.

Manual governance works at a small scale. Enterprise-level content ecosystems require automation layers.

Automation Components

- AI-assisted content scoring

- Drift detection systems

- Schema validation automation

- Content update alerts

- Version control tracking
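A drift detection system can begin with something very simple: compare the wording an AI engine currently produces against the canonical definition and raise an alert when they diverge too far. The sketch below uses word-level similarity from Python's standard `difflib` module as a crude stand-in; the 0.5 threshold and the function names are assumptions, and lexical overlap will miss paraphrases that a semantic comparison would catch.

```python
from difflib import SequenceMatcher


def drift_score(canonical: str, observed: str) -> float:
    """0.0 means identical wording, 1.0 means no words in common."""
    a = canonical.lower().split()
    b = observed.lower().split()
    return 1.0 - SequenceMatcher(None, a, b).ratio()


def has_drifted(canonical: str, observed: str, threshold: float = 0.5) -> bool:
    """Flag an observed summary whose wording strays past the threshold."""
    return drift_score(canonical, observed) > threshold


canonical = ("AI content lifecycle management is the structured governance "
             "of generative content")
close_match = canonical + " today"
unrelated = "Totally unrelated sentence about cooking pasta tonight"

alerts = [text for text in (close_match, unrelated) if has_drifted(canonical, text)]
```

In practice the observed text would come from periodic sampling of AI answers, and flagged items would feed the content update alerts mentioned above.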

In advanced ecosystems, lifecycle automation connects:

- Editorial systems

- Analytics dashboards

- AI performance monitoring tools

- Executive reporting frameworks

Automation transforms the generative content lifecycle from a workflow into a governance infrastructure.

AI Lifecycle Maturity Model Summary

| Maturity Level | Governance Structure | Monitoring Depth | Automation |
| --- | --- | --- | --- |
| Level 1 | Minimal oversight | Traffic metrics | None |
| Level 2 | Defined workflow | Ranking + engagement | Partial |
| Level 3 | Full AIO governance | AI visibility metrics | Integrated automation |
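For a quick self-assessment, the summary table can be turned into a lookup. The sketch below assumes one particular scoring rule, namely that an organization's overall level is the lowest level it reaches on any of the three dimensions; the table itself does not prescribe how to combine them.

```python
# Values mirror the rows of the summary table (lower-cased for matching).
GOVERNANCE = {"minimal oversight": 1, "defined workflow": 2, "full aio governance": 3}
MONITORING = {"traffic metrics": 1, "ranking + engagement": 2, "ai visibility metrics": 3}
AUTOMATION = {"none": 1, "partial": 2, "integrated automation": 3}


def maturity_level(governance: str, monitoring: str, automation: str) -> int:
    """Overall level = weakest dimension (an assumed, conservative rule)."""
    return min(
        GOVERNANCE[governance.lower()],
        MONITORING[monitoring.lower()],
        AUTOMATION[automation.lower()],
    )


level = maturity_level("Defined workflow", "AI visibility metrics", "Partial")
```

Taking the minimum keeps a team from claiming Level 3 on the strength of advanced metrics alone while governance or automation still lags.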

Organizations operating at Level 3 maturity experience:

- Greater AI citation stability

- Reduced misinformation risk

- Stronger entity authority

- Higher long-term ROI

FAQs

What is AI content lifecycle management?

AI content lifecycle management is the structured governance of generative content across creation, distribution, reinforcement, monitoring and retirement phases to ensure accuracy, consistency and long-term AI visibility.

Why is the generative content lifecycle important?

The generative content lifecycle ensures that AI-produced and AI-indexed content remains accurate, reinforced and aligned with brand and regulatory standards over time.

How does AIO governance fit into the AI content lifecycle model?

AIO governance provides executive oversight, monitoring frameworks and compliance controls that stabilize and scale generative content operations.

What happens if content is not monitored in the AI era?

Without monitoring, content can experience drift, lose AI visibility, propagate outdated information, and damage authority signals.

Conclusion

Generative AI has changed the economics of content, but governance determines sustainability. The generative content lifecycle reframes content from static publication into a governed, evolving digital asset. Organizations that adopt a structured AI content lifecycle model supported by mature AIO governance gain more than visibility. They gain control. In the AI era, control is authority. And authority compounds.