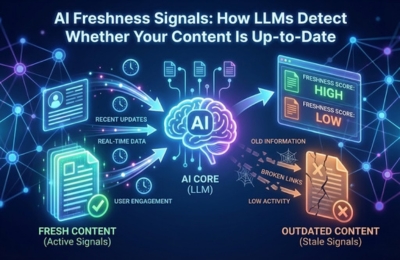

AI freshness signals are how large language models (LLMs) decide whether your content is current, reliable and safe to reuse in answers. Unlike classic SEO freshness, AI systems evaluate update patterns, structural edits, timestamps and external data alignment to assign a “recency confidence.” This post breaks down how LLMs detect freshness, what signals they actually read, how often updates matter and how to build a repeatable freshness checklist for AIO visibility.

Freshness Signals in AIO

Freshness has quietly become one of the most misunderstood yet critical ranking inputs in Artificial Intelligence Optimization (AIO). As LLM-powered systems increasingly answer questions directly, often without sending traffic to the source, your content’s ability to appear in those answers depends on whether the AI trusts it as current.

AI freshness signals are not simply about showing a recent date on a page. They are a composite of behavioral, structural and contextual indicators that help models decide if your information still reflects the present state of reality.

In AI-driven environments, stale content doesn’t just rank lower; it may disappear entirely from answer generation. LLMs are designed to minimize risk. Reusing outdated guidance, incorrect data, or obsolete frameworks is a liability. As a result, freshness has shifted from a “nice-to-have” SEO factor into a trust prerequisite for AI visibility.

This is especially relevant in zero-click AI search, where the model must select a small subset of sources it can confidently summarize or paraphrase. Freshness becomes a gating signal.

How AI detects fresh updates

LLMs do not crawl the web in real time like traditional search engines, but they do learn patterns that indicate whether content is being actively maintained.

At a high level, AI systems infer freshness through change detection logic rather than date validation. They look for signals that suggest a page is part of a living knowledge system instead of a static artifact.

Key detection mechanisms include:

Temporal consistency

Content that aligns with recent terminology, current practices and updated frameworks signals recency. For example, references to outdated tools or deprecated processes immediately lower freshness confidence.

Revision behavior patterns

Pages that show periodic refinement, expanded sections, clarified explanations, or updated examples demonstrate maintenance intent. One-off rewrites without follow-up often fail to sustain freshness signals.

Cross-source alignment

LLMs compare your claims with other trusted, recently updated sources. If your content diverges from the current consensus, recency confidence drops even if your page was updated yesterday.

Importantly, freshness is not binary. AI systems assign degrees of freshness confidence based on how many reinforcing signals are present.
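To make the idea of "degrees" concrete, here is a minimal Python sketch that blends the three mechanisms above into a single 0-1 score. It is a self-audit heuristic, not how any LLM actually scores pages: the signal names, the weights and the assumption that each signal can be rated from 0 to 1 are all hypothetical.

```python
# Hypothetical weights for the three detection mechanisms described above.
# They are illustrative assumptions, not values used by any AI system.
WEIGHTS: dict[str, float] = {
    "temporal_consistency": 0.35,
    "revision_pattern": 0.25,
    "cross_source_alignment": 0.40,
}


def freshness_confidence(signals: dict[str, float]) -> float:
    """Blend per-signal ratings (each 0.0-1.0) into one weighted score.

    A missing signal counts as 0.0, so the score only rises as more
    reinforcing signals are present, mirroring "degrees" of freshness.
    """
    total = 0.0
    for name, weight in WEIGHTS.items():
        value = signals.get(name, 0.0)
        if not 0.0 <= value <= 1.0:
            raise ValueError(f"{name} must be between 0 and 1, got {value}")
        total += weight * value
    return round(total, 3)


# A page with current terminology and steady revisions, but unchecked claims:
print(freshness_confidence({"temporal_consistency": 1.0, "revision_pattern": 1.0}))  # prints 0.6
```

Because absent signals contribute nothing, a page only approaches 1.0 when all three kinds of evidence agree, which is the "reinforcing signals" idea in miniature.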

Signals AI reads (dates, edits, feeds)

When evaluating content freshness, AI models rely on multiple layers of signals, many of which are invisible to human readers.

1. Dates and timestamps

Visible “last updated” dates help, but only when they are credible. AI systems quickly learn to discount pages that update timestamps without meaningful content changes. Consistency between dates and actual edits matters more than the date itself.
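One way to audit your own pages for this is to check whether a moved "last updated" date is backed by real edits. The Python sketch below uses the standard library's `difflib` to measure how much the text changed between two saved versions; the 5% threshold and the function names are assumptions chosen for illustration, not a cutoff any AI system is known to use.

```python
import difflib
from datetime import date


def change_ratio(old_text: str, new_text: str) -> float:
    """Share of the text that changed: 0.0 means identical, 1.0 means nothing shared."""
    similarity = difflib.SequenceMatcher(None, old_text, new_text).ratio()
    return 1.0 - similarity


def is_redate_only(old_date: date, new_date: date,
                   old_text: str, new_text: str,
                   min_change: float = 0.05) -> bool:
    """True when the date moved forward but the body barely changed.

    min_change (5% of the text) is a hypothetical threshold for this sketch.
    """
    date_bumped = new_date > old_date
    return date_bumped and change_ratio(old_text, new_text) < min_change


old = "Step 1: install the tool. Step 2: configure it."
print(is_redate_only(date(2024, 1, 10), date(2024, 6, 1), old, old))  # True
```

A `True` result marks exactly the pattern described above: a fresher timestamp with no meaningful content change behind it.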

2. Structural edits

LLMs are highly sensitive to structural change. Signals include:

- New subheadings added

- Sections expanded or reorganized

- Outdated paragraphs removed or reframed

These edits suggest that the author revisited the topic with intent, not automation.
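If your pages are stored as Markdown, a rough way to track the first two kinds of structural edit is to compare subheadings between versions. This Python sketch assumes `#`-style headings and only detects added or removed headings, not reordering or paragraph rewrites; the function names are hypothetical.

```python
import re

# Assumes Markdown ATX headings such as "## Setup"; HTML pages would need a parser.
HEADING = re.compile(r"^#{1,6}[ \t]+(.+?)[ \t]*$", re.MULTILINE)


def headings(text: str) -> set[str]:
    """Collect heading titles from a Markdown document."""
    return set(HEADING.findall(text))


def structural_changes(old_text: str, new_text: str) -> dict[str, list[str]]:
    """Report subheadings that were added or removed between two versions."""
    old, new = headings(old_text), headings(new_text)
    return {"added": sorted(new - old), "removed": sorted(old - new)}


before = "# Guide\n## Setup\nText\n## Legacy API\nOld notes\n"
after = "# Guide\n## Setup\nText\n## Troubleshooting\nNew notes\n"
print(structural_changes(before, after))
# {'added': ['Troubleshooting'], 'removed': ['Legacy API']}
```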

3. Data and reference renewal

Replacing old statistics, retiring obsolete examples and introducing newer reference points significantly strengthen AI update signals. Fresh data anchors content in the present.
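A quick way to find candidates for data renewal is to scan a page for the years it cites. The Python sketch below flags four-digit years older than a chosen age; the two-year cutoff and the function name are assumptions, and a flagged year is only a prompt for review, since historical dates can be perfectly valid.

```python
import re
from datetime import date
from typing import Optional

# Matches plausible years from 1900 to 2099 as standalone numbers.
YEAR = re.compile(r"\b(?:19|20)\d{2}\b")


def stale_years(text: str, max_age: int = 2, today: Optional[date] = None) -> list[int]:
    """Return distinct cited years more than max_age years before today.

    max_age=2 is a hypothetical default; tune it to how fast the topic moves.
    """
    current_year = (today or date.today()).year
    cited = {int(match) for match in YEAR.findall(text)}
    return sorted(year for year in cited if current_year - year > max_age)


page = "A 2019 survey found X, and our 2024 benchmark confirms Y."
print(stale_years(page, today=date(2025, 3, 1)))  # [2019]
```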

4. Content feeds and update cadence

Blogs, documentation hubs and resource centers that publish related updates over time create a freshness ecosystem. AI models recognize sites that evolve topics instead of freezing them.
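If you keep a log of update dates, you can check whether a page or topic hub actually has a steady rhythm. This Python sketch computes the gaps between updates and their coefficient of variation (standard deviation divided by the mean gap); lower values indicate a more predictable cadence, the kind of rhythm this article argues LLMs favor. Treating that ratio as a proxy for what models reward is an assumption, and the function names are hypothetical.

```python
from datetime import date
from statistics import mean, pstdev


def update_gaps(dates: list[date]) -> list[int]:
    """Days between consecutive distinct update dates, oldest first."""
    ordered = sorted(set(dates))
    return [(later - earlier).days for earlier, later in zip(ordered, ordered[1:])]


def cadence_summary(dates: list[date]) -> dict[str, float]:
    """Mean gap in days plus its coefficient of variation (lower = steadier)."""
    gaps = update_gaps(dates)
    if len(gaps) < 2:
        raise ValueError("need at least three distinct update dates")
    avg = mean(gaps)
    return {"mean_gap_days": avg, "variation": pstdev(gaps) / avg}


quarterly = [date(2024, 1, 1), date(2024, 4, 1), date(2024, 7, 1), date(2024, 10, 1)]
sporadic = [date(2024, 1, 1), date(2024, 1, 10), date(2024, 9, 1)]
print(cadence_summary(quarterly))
print(cadence_summary(sporadic))
```

The quarterly log yields a variation close to zero, while the sporadic one (a 9-day gap followed by a 235-day gap) yields a value near 1, even though both pages were "updated" in 2024.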

5. Semantic alignment with recent queries

If your content reflects how users currently ask questions, including their terminology, phrasing and concerns, it signals active relevance. This is closely tied to recency ranking behavior in AI answers.

Frequency of updates LLMs prefer

One of the most common misconceptions is that frequent updates are always better. In reality, LLMs prefer predictable, topic-appropriate update rhythms.

There is no universal cadence, but patterns matter:

Evergreen technical content

Light updates every 3–6 months maintain freshness without destabilizing authority. These updates often involve clarification, expansion, or tool changes.

Rapidly evolving topics

Content tied to AI, security, policy, or platforms benefits from more frequent revision. Inconsistent updates here are a red flag.

Foundational explanations

Core concepts should evolve slowly. Over-updating foundational material can signal instability or uncertainty.

What LLMs penalize is not infrequent updates but neglect. Content that remains untouched while its surrounding ecosystem evolves loses freshness and trust over time.
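Neglect is easy to check mechanically once you pick a review window per topic type. In this Python sketch only the evergreen window comes from the guidance above (roughly six months); the 30-day and 365-day windows for fast-moving and foundational content, and the topic labels themselves, are hypothetical placeholders to adjust for your own site.

```python
from datetime import date

# Review windows in days. Evergreen (~6 months) follows the 3-6 month guidance
# above; the other two values are illustrative assumptions.
REVIEW_WINDOW_DAYS: dict[str, int] = {
    "evergreen_technical": 180,
    "rapidly_evolving": 30,
    "foundational": 365,
}


def is_neglected(topic_type: str, last_meaningful_edit: date, today: date) -> bool:
    """True when a page has gone longer than its topic's window without a real edit."""
    window = REVIEW_WINDOW_DAYS[topic_type]
    return (today - last_meaningful_edit).days > window


check_day = date(2025, 3, 1)
print(is_neglected("rapidly_evolving", date(2025, 1, 1), check_day))  # True
print(is_neglected("foundational", date(2025, 1, 1), check_day))  # False
```

Feeding this the date of the last meaningful edit, rather than the displayed timestamp, keeps re-dated but unchanged pages from passing the check.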

A useful mental model:

Freshness is about responsiveness, not velocity.

Freshness score checklist

Use the following checklist to evaluate whether your content sends strong AI freshness signals:

- Does the content reflect current terminology and practices?

- Are examples, tools and references still valid today?

- Have sections been meaningfully edited, not just re-dated?

- Is the topic supported by related, recently updated content?

- Does the page align with the current user intent and phrasing?

- Are outdated assumptions explicitly removed or corrected?

- Does the update cadence match the topic’s rate of change?
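Teams that run this checklist across many pages may want to record the answers consistently. The Python sketch below scores one page, reading "most of these" as more than half of the seven questions; the short keys and that majority threshold are assumptions for illustration.

```python
# Short, hypothetical keys for the seven checklist questions above.
CHECKLIST: dict[str, str] = {
    "current_terminology": "Does the content reflect current terminology and practices?",
    "valid_references": "Are examples, tools and references still valid today?",
    "meaningful_edits": "Have sections been meaningfully edited, not just re-dated?",
    "supporting_content": "Is the topic supported by related, recently updated content?",
    "current_intent": "Does the page align with the current user intent and phrasing?",
    "outdated_removed": "Are outdated assumptions explicitly removed or corrected?",
    "matching_cadence": "Does the update cadence match the topic's rate of change?",
}


def score_checklist(answers: dict[str, bool]) -> dict[str, object]:
    """Count 'yes' answers; unanswered questions count as 'no'."""
    to_fix = [question for key, question in CHECKLIST.items() if not answers.get(key, False)]
    yes = len(CHECKLIST) - len(to_fix)
    return {
        "yes": yes,
        "total": len(CHECKLIST),
        "mostly_yes": yes > len(CHECKLIST) / 2,  # "most" read as a majority
        "to_fix": to_fix,
    }


result = score_checklist({"current_terminology": True, "valid_references": True,
                          "meaningful_edits": False, "current_intent": True})
print(result["yes"], result["mostly_yes"])  # 3 False
```

The `to_fix` list doubles as an edit plan: each remaining question points at the part of the page to revisit next.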

If you cannot confidently answer “yes” to most of these, the page likely carries a low freshness score in AI systems, even if it ranks in traditional search. For deeper alignment, connect freshness optimization with zero-click AI search strategies so your updates directly support answer eligibility.

For comparison with classic search freshness models, see Google Freshness Algorithms, but remember: AI freshness prioritizes reuse safety over crawl timing.

FAQs

How often should I update content?

Update frequency should match how quickly the topic evolves. Evergreen topics may need updates every 3–6 months, while fast-changing subjects require more frequent review.

Do LLMs care about published dates or last updated dates?

Dates help, but only when supported by real content changes. LLMs evaluate edits, structure and semantic relevance, not just timestamps.

Can updating old content improve AI visibility?

Yes. Meaningful updates can restore freshness confidence and increase the likelihood that AI systems reuse your content in answers.

Is freshness more important than authority for AI ranking?

Freshness and authority work together. High-authority content can still be excluded if it becomes outdated or misaligned with current knowledge.