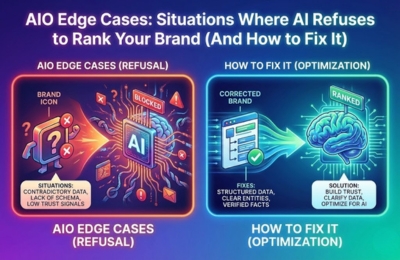

Even strong brands sometimes disappear from AI-generated answers. These AI optimization (AIO) edge cases occur when AI systems detect trust gaps, conflicting signals, or over-optimization patterns that trigger silent suppression. This guide breaks down why AI refuses to cite certain brands and provides a practical, step-by-step recovery plan to regain visibility across AI-powered search and LLM responses.

AIO Edge Cases

AI-powered search engines don’t “rank” content the way traditional SERPs do. Instead, they select sources they trust enough to reuse in generated answers. This distinction creates a new category of problems, AIO edge cases, in which your content technically exists, is crawlable, and even performs well in classic SEO, yet AI systems consistently refuse to cite or reference your brand.

These situations are frustrating because there’s no manual penalty, no clear warning and no error message. AI simply chooses not to include you.

Understanding why this happens requires shifting from keyword-centric thinking to trust-centric analysis. Let’s break down the most common scenarios where AI suppresses content and how to fix them.

When AI Refuses to Cite You

The first sign of an AIO edge case is absence. Your brand doesn’t appear in AI answers, summaries, or comparisons, even when your content clearly addresses the query.

This typically shows up on platforms like ChatGPT, Gemini, Claude, or Perplexity, where competitors are repeatedly mentioned and you are ignored. Importantly, this isn’t always about quality. In many cases, AI may recognize your content but judge it unsafe, unclear, or unreliable to reuse.

AI systems are optimized to minimize risk. When uncertainty appears about accuracy, authority, or consistency, the model defaults to silence rather than citation.

At this point, suppression is a trust-based filtering decision, not a relevance issue.

Causes of Suppression

AI suppression rarely has a single cause. Most cases emerge from layered signals that compound over time.

One major factor is ambiguity. If your content sends mixed messages about what your brand represents, what problem it solves, or how authoritative it is, AI struggles to categorize you confidently.

Another common cause is pattern deviation. Large language models learn from repeated structures, definitions and explanations across trusted sources. Content that deviates too far without clear framing can appear risky to reuse.

Finally, historical signals matter. AI systems learn from the broader web ecosystem. If your brand appears inconsistently, lacks reinforcement, or has unresolved contradictions across platforms, suppression becomes more likely.

Over-Optimization Issues

Ironically, trying too hard to optimize can trigger negative AIO signals.

Over-optimization in AIO isn’t limited to keyword stuffing. It includes excessive repetition of branded terms, aggressive semantic clustering without clarity, or artificial formatting designed purely to “force” AI extraction.

When AI detects content engineered more for manipulation than explanation, trust drops. LLMs are trained on natural language patterns, so content that feels mechanically structured, overly templated, or unnaturally dense with signals may be treated as low-confidence.
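Repetition is one of the few over-optimization signals you can measure directly. The sketch below counts how often a branded phrase appears per 100 words, so you can spot pages that lean on it heavily. The brand name, sample text, and the 3.0 review threshold are hypothetical. No AI platform publishes a density cutoff, so treat the number as a prompt for a human rewrite, not as a rule.

```python
import re

# Hypothetical review threshold (occurrences per 100 words); not a known AI cutoff.
REVIEW_THRESHOLD = 3.0


def term_density(text: str, terms: list[str]) -> dict[str, float]:
    """Return occurrences of each term per 100 words (case-insensitive)."""
    lowered = text.lower()
    words = re.findall(r"[\w'-]+", lowered)
    total = len(words)
    if total == 0:
        return {t: 0.0 for t in terms}
    result = {}
    for term in terms:
        # Whole-phrase match with word boundaries to avoid partial hits.
        pattern = r"\b" + re.escape(term.lower()) + r"\b"
        count = len(re.findall(pattern, lowered))
        result[term] = round(100 * count / total, 2)
    return result


def flag_dense_terms(text: str, terms: list[str], threshold: float = REVIEW_THRESHOLD) -> list[str]:
    """List the terms whose density exceeds the review threshold."""
    return [t for t, d in term_density(text, terms).items() if d > threshold]


# Hypothetical page copy that repeats the brand name heavily.
sample = ("Acme Analytics is the best analytics platform. Acme Analytics helps teams. "
          "Choose Acme Analytics for analytics.")
print(term_density(sample, ["Acme Analytics"]))
print(flag_dense_terms(sample, ["Acme Analytics"]))
```

A flagged term is a candidate for rewriting with pronouns, definitions, or plain explanation, in line with the "understanding first" principle.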

In these cases, suppression is protective behavior: the model avoids citing sources that appear strategically inflated rather than informationally grounded.

The fix isn’t removing optimization; it’s restoring balance. Content must feel written for understanding first and extraction second.

Conflicting Entity Signals

One of the most overlooked AIO edge cases is entity conflict.

If your brand is described differently across pages, platforms, or formats, AI struggles to unify those references into a single, trusted entity. This includes mismatched descriptions of services, inconsistent terminology, or varying claims of expertise.

For example, if one page positions your brand as a consultant, another as a software provider and a third as a training platform without a clear hierarchy, AI hesitates to reuse any of it.
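A quick way to surface this kind of conflict is to scan your pages for the labels they use to describe the brand. This is a minimal sketch. It assumes you already have page text in a dictionary keyed by URL. The positioning vocabulary and sample pages are hypothetical and should be replaced with the terms your own site uses.

```python
import re

# Hypothetical positioning vocabulary: label -> phrases that imply it.
POSITIONING_TERMS = {
    "consultant": ["consultancy", "consulting firm", "consultant"],
    "software provider": ["software provider", "saas platform", "software company"],
    "training platform": ["training platform", "online courses", "academy"],
}


def detect_positioning(text: str) -> set[str]:
    """Return every positioning label whose phrases appear in the text."""
    lowered = text.lower()
    found = set()
    for label, phrases in POSITIONING_TERMS.items():
        if any(re.search(r"\b" + re.escape(p) + r"\b", lowered) for p in phrases):
            found.add(label)
    return found


def positioning_report(pages: dict[str, str]) -> dict[str, set[str]]:
    """Map each positioning label to the set of pages that use it."""
    report: dict[str, set[str]] = {}
    for url, text in pages.items():
        for label in detect_positioning(text):
            report.setdefault(label, set()).add(url)
    return report


# Hypothetical pages mirroring the consultant / software / training example.
pages = {
    "/about": "We are a consulting firm helping retailers.",
    "/product": "Acme is a software provider for retail analytics.",
    "/learn": "Join our training platform with online courses.",
}
report = positioning_report(pages)
if len(report) > 1:
    print("Conflicting positioning:", {k: sorted(v) for k, v in report.items()})
```

Several labels are not automatically wrong, since a brand can offer both consulting and software. The report only shows where a clear hierarchy needs to be stated.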

Conflicting entity signals are especially damaging in AI answers because LLMs prefer stable, well-defined concepts. When clarity is missing, the safest option is omission.

Resolving this requires alignment across your site, author profiles, structured data, and external mentions so AI can confidently understand who you are and why you’re authoritative.
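For structured data, one practical approach is to generate markup from a single canonical profile rather than editing descriptions page by page. The sketch below renders a schema.org `Organization` block as JSON-LD. The properties `name`, `url`, `description`, and `sameAs` are standard schema.org properties. The brand details and URLs are placeholders.

```python
import json

# Single source of truth for how the brand is described (hypothetical values).
BRAND_PROFILE = {
    "name": "Acme Analytics",
    "url": "https://www.example.com",
    "description": "Acme Analytics is a software provider of retail analytics tools.",
    "sameAs": [
        "https://www.linkedin.com/company/example",
        "https://github.com/example",
    ],
}


def organization_jsonld(profile: dict) -> str:
    """Render a schema.org Organization script tag from the canonical profile."""
    data = {"@context": "https://schema.org", "@type": "Organization", **profile}
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


print(organization_jsonld(BRAND_PROFILE))
```

Drawing site copy from the same profile keeps the description identical wherever it appears.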

Trust Gaps & Accuracy Issues

Trust is the final gatekeeper in AIO.

Even minor factual inconsistencies, outdated claims, or vague sourcing can create trust gaps. Unlike human readers, AI evaluates content at scale and cross-references it against learned patterns and known facts.

If your content contradicts widely accepted explanations or lacks precision where competitors are clear, the model may classify it as unreliable even if it’s mostly correct.
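Factual drift between your own pages is one of the easier trust gaps to catch. The sketch below uses a few regular-expression patterns for claims a brand commonly repeats, such as founding year and years of experience. It reports any claim that takes more than one value across pages. The patterns and page text are illustrative assumptions, not a complete fact checker.

```python
import re
from collections import defaultdict

# Hypothetical claim patterns; each captures the value a page asserts.
CLAIM_PATTERNS = {
    "founding year": re.compile(r"founded in (\d{4})", re.IGNORECASE),
    "years of experience": re.compile(r"(\d+)\+? years of experience", re.IGNORECASE),
}


def find_conflicting_claims(pages: dict[str, str]) -> dict[str, dict[str, list[str]]]:
    """Return claims that take more than one value across pages, with source URLs."""
    values: dict[str, dict[str, list[str]]] = defaultdict(lambda: defaultdict(list))
    for url, text in pages.items():
        for claim, pattern in CLAIM_PATTERNS.items():
            for value in pattern.findall(text):
                values[claim][value].append(url)
    # Keep only claims with disagreeing values.
    return {
        claim: dict(by_value)
        for claim, by_value in values.items()
        if len(by_value) > 1
    }


# Hypothetical pages: the founding year agrees, the experience claim does not.
pages = {
    "/about": "Founded in 2012, we bring 10 years of experience.",
    "/services": "Our team has 12+ years of experience.",
    "/press": "The company was founded in 2012.",
}
print(find_conflicting_claims(pages))
```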

This is where factors such as AI crawlability and LLM authority ranking play a role. Content that’s easy to parse, factually tight, and structurally consistent is more likely to pass AI trust thresholds.
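Crawlability is a precondition for everything else, and one quick check is whether `robots.txt` blocks the crawlers some AI vendors use. This sketch uses Python's standard `urllib.robotparser` module. The user-agent names listed are ones vendors have published, but they can change, so verify them against each vendor's current crawler documentation. The robots.txt content and URL are examples.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens published by some AI vendors; confirm current names
# in each vendor's crawler documentation before relying on this list.
AI_CRAWLERS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def ai_crawler_access(robots_txt: str, url: str) -> dict[str, bool]:
    """Report whether each AI crawler may fetch `url` under the given robots.txt."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_CRAWLERS}


# Example robots.txt that blocks one AI crawler and allows everyone else.
robots_txt = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""
print(ai_crawler_access(robots_txt, "https://www.example.com/blog/"))
```

In practice you would fetch your live robots.txt, for example with `RobotFileParser.set_url()` and `read()`, instead of using an inline string.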

For deeper guidance on acceptable error handling and factual alignment, refer to the official error and safety documentation from OpenAI. Their guidelines help explain why models avoid uncertain sources and how accuracy impacts reuse.

Step-by-Step Recovery Plan

Recovering from AIO edge cases requires methodical correction, not aggressive publishing.

- Step 1: Audit suppression patterns. Identify which queries exclude you and which competitors are consistently cited. Look for structural or semantic differences, not just content length.

- Step 2: Normalize entity signals. Ensure your brand description, positioning, and terminology are consistent across all core pages and references.

- Step 3: Reduce over-optimization. Rewrite sections that feel engineered. Replace forced keyword usage with natural explanatory language and clearer definitions.

- Step 4: Strengthen trust layers. Add precise explanations, remove ambiguity, and ensure factual alignment across related content. Consistency matters more than volume.

- Step 5: Reinforce with internal clarity. Improve internal linking related to AI crawlability and authority pathways so models can better contextualize your expertise.
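Step 1 is easier to act on with a simple tally. Suppose you have collected AI answers for a set of target queries, for example by running the same prompts on each platform and saving the responses. The sketch below counts brand and competitor mentions per query and lists the queries where competitors appear but you do not. The brand names and answers are hypothetical. Plain mention counting also ignores context, such as a negative mention.

```python
import re


def mentioned(name: str, text: str) -> bool:
    """True if `name` appears as a whole phrase in `text` (case-insensitive)."""
    return re.search(r"\b" + re.escape(name) + r"\b", text, re.IGNORECASE) is not None


def audit_answers(answers: dict[str, list[str]], brand: str, competitors: list[str]):
    """Count mentions per query and list queries where only competitors appear."""
    report = {}
    for query, samples in answers.items():
        report[query] = {
            name: sum(mentioned(name, s) for s in samples)
            for name in [brand, *competitors]
        }
    gaps = [
        q for q, counts in report.items()
        if counts[brand] == 0 and any(counts[c] > 0 for c in competitors)
    ]
    return report, gaps


# Hypothetical answers saved from AI platforms for two target queries.
answers = {
    "best retail analytics tools": [
        "Popular options include Northwind and Contoso.",
        "Northwind is often recommended for retailers.",
    ],
    "retail analytics for small shops": [
        "Acme Analytics and Contoso both offer small-business plans.",
    ],
}
report, gaps = audit_answers(answers, "Acme Analytics", ["Northwind", "Contoso"])
print(report)
print("Suppressed queries:", gaps)
```

Repeating the same collection after each round of fixes gives a rough before-and-after comparison for the queries that matter most.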

Recovery isn’t instant. AI systems need repeated exposure to corrected signals before trust is rebuilt, but once alignment is restored, visibility can return, sometimes stronger than before.

FAQs

Why doesn’t AI mention my brand?

AI avoids citing brands when it detects uncertainty, conflicting signals, or trust gaps. This often happens due to over-optimization, unclear positioning, or inconsistent entity information.

Can AI suppress content even if SEO rankings are strong?

Yes. Traditional SEO performance doesn’t guarantee AI inclusion. AI systems prioritize trust, clarity and reusability over rankings alone.

How long does it take to recover from AIO suppression?

Recovery timelines vary, but consistent corrections typically show improvement over several weeks as models re-encounter aligned signals.

Is over-optimization a real risk in AIO?

Absolutely. Excessive structuring, forced semantics, or unnatural language patterns can trigger negative signals that lead AI systems to avoid citing your content.