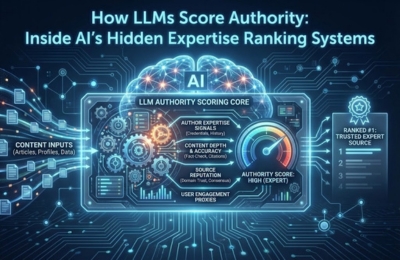

Large Language Models don’t rank content the way Google’s classic algorithms do. LLM authority ranking is based on how consistently, accurately and repeatedly your expertise shows up across the web over time. AI evaluates entity strength, social validation, contextual mentions, factual stability and historical footprint to decide whether your content is trusted enough to be reused in answers. This guide breaks down how those hidden authority systems work and how to align with them.

How AI Scores Authority

Authority inside AI systems does not come down to backlinks, keyword density, or publishing volume alone. Modern LLMs evaluate expertise probability: how likely a source is to provide reliable, reusable knowledge across multiple contexts.

Instead of ranking pages, LLMs rank sources of truth. That includes brands, authors, organizations and domains as entities. When AI answers a query, it doesn’t ask “who ranks first?” It asks:

- Who has demonstrated stable expertise over time?

- Who is mentioned consistently in relevant contexts?

- Whose information remains accurate across repeated references?

This is the foundation of LLM authority ranking, a hidden but powerful system that determines whether your insights get reused, summarized, or ignored inside AI-generated answers.

Entity strength factors

At the core of AI authority scoring is entity evaluation. LLMs rely heavily on entity-based understanding rather than isolated pages.

Strong entity signals include:

- Clear definition of who you are and what you specialize in

- Consistent naming across websites, author bios, schema and profiles

- Strong topical alignment between entity and subject matter

- Clean associations between your brand and core topics

For example, an entity repeatedly associated with “AI optimization,” “LLM behavior,” and “semantic search” builds stronger authority than one publishing randomly across unrelated themes.

This is where entity SEO becomes foundational. When entities are clearly defined, AI can confidently map expertise and reuse insights without ambiguity.
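One common way to make an entity definition explicit is schema.org structured data embedded in a page as JSON-LD. The sketch below is a minimal illustration, not a guaranteed ranking lever: the helper name `build_entity_jsonld`, the organization "Example Labs", and all URLs are placeholders invented for this example. The `name`, `url`, `sameAs`, and `knowsAbout` keys are real schema.org properties of `Organization`.

```python
import json


def build_entity_jsonld(name, url, profiles, topics):
    """Return a schema.org Organization description as a JSON-LD string."""
    entity = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,                # use the exact same spelling everywhere
        "url": url,
        "sameAs": sorted(profiles),  # other profiles that belong to this entity
        "knowsAbout": list(topics),  # core topics the entity specializes in
    }
    return json.dumps(entity, indent=2)


# Placeholder values for illustration only, not a real organization.
markup = build_entity_jsonld(
    name="Example Labs",
    url="https://www.example.com",
    profiles=[
        "https://www.linkedin.com/company/example-labs",
        "https://github.com/example-labs",
    ],
    topics=["AI optimization", "LLM behavior", "semantic search"],
)
print(markup)
```

The resulting string would typically be placed inside a `<script type="application/ld+json">` tag. Generating it from one function, rather than hand-editing each page, is one way to keep the name and topic list identical across a site.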

Social validation signals

LLMs do not browse social media like humans, but they do ingest social validation as indirect trust signals through training data and real-time retrieval systems.

Key validation indicators include:

- Citations in expert discussions, blogs and interviews

- Mentions in industry analysis or research commentary

- Quotes reused across multiple independent sources

- Consensus alignment with other trusted voices

What matters is not virality, but repetition with credibility. When AI observes multiple authoritative sources referencing similar insights from the same entity, confidence increases.

This is why authority is often cumulative. One strong article rarely moves the needle, but a consistent expert presence across platforms does.

Mention frequency & context

Not all mentions are equal. LLMs evaluate how and where an entity is mentioned.

High-value mention patterns include:

- Appearing in explanatory or educational contexts

- Being cited as a source of insight, not just listed

- Repeated association with the same expertise domain

- Mentions that align semantically with core topics

Low-value patterns include generic listings, irrelevant references, or conflicting topical associations.

This is a critical difference between traditional SEO and AI systems. LLMs measure contextual relevance, not just link signals. Mention frequency only matters when context reinforces expertise.
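To audit your own mentions along these lines, a rough first pass is to measure what share of them appear next to your core topics. The sketch below is a self-audit heuristic under simple assumptions (plain substring matching, a hypothetical `CORE_TOPICS` list, made-up mention snippets); it is not a description of how any LLM scores sources.

```python
import re

# Hypothetical core topics for the entity being audited.
CORE_TOPICS = ["ai optimization", "llm behavior", "semantic search"]


def is_on_topic(mention_text, topics=CORE_TOPICS):
    """True if the text around a mention names at least one core topic."""
    text = re.sub(r"\s+", " ", mention_text.lower())
    return any(topic in text for topic in topics)


def contextual_share(mentions, topics=CORE_TOPICS):
    """Fraction of mentions whose surrounding text reinforces a core topic."""
    if not mentions:
        return 0.0
    on_topic = sum(1 for m in mentions if is_on_topic(m, topics))
    return on_topic / len(mentions)


# Made-up snippets: two explanatory mentions, one bare listing, one off-topic.
mentions = [
    "Example Labs explains how semantic search changes content strategy.",
    "Sponsors: Acme, Example Labs, Initech.",
    "According to Example Labs, LLM behavior shifts when sources conflict.",
    "Example Labs also sells office furniture.",
]
share = contextual_share(mentions)
print(f"{share:.2f}")  # prints 0.50
```

A low share suggests that raw mention counts are overstating how strongly the entity is tied to its expertise area.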

Accuracy + stability signals

Accuracy is not a one-time check; it’s longitudinal.

LLMs track patterns such as:

- Whether claims remain consistent across time

- Whether facts align with broader knowledge graphs

- Whether updates contradict previous statements

- Whether explanations remain stable across formats

When AI detects frequent contradictions, shifting narratives, or uncorrected inaccuracies, authority scores decline even if traffic is high.

This is why trust evaluation is continuous. AI doesn’t “penalize” content; it simply stops reusing sources that introduce uncertainty.
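Contradictions of this kind can be caught on your own side before anything external sees them. The sketch below assumes you have already extracted key facts from your pages as `(source, fact, value)` triples; that extraction step, the function name `find_contradictions`, and the sample data are all assumptions made for illustration.

```python
from collections import defaultdict


def find_contradictions(statements):
    """Return facts that are stated with more than one distinct value.

    `statements` is an iterable of (source, fact, value) triples. Values are
    compared case-insensitively with whitespace collapsed.
    """
    values_by_fact = defaultdict(lambda: defaultdict(list))
    for source, fact, value in statements:
        normalized = " ".join(str(value).lower().split())
        values_by_fact[fact][normalized].append(source)
    return {
        fact: dict(values)
        for fact, values in values_by_fact.items()
        if len(values) > 1  # more than one distinct value means a conflict
    }


# Made-up audit data: the founding year disagrees, the name does not.
statements = [
    ("about-page", "founded", "2016"),
    ("press-kit", "founded", "2016"),
    ("guest-post", "founded", "2018"),
    ("about-page", "name", "Example Labs"),
    ("author-bio", "name", "example  labs"),
]
conflicts = find_contradictions(statements)
for fact, values in conflicts.items():
    print(fact, values)
```

Here only `founded` is reported, along with which sources state which year, so the outlier can be corrected at its source.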

Historical footprint

Authority is earned slowly and lost quickly.

LLMs strongly favor entities with:

- Long-standing topical presence

- Consistent publishing history in one domain

- Gradual evolution rather than abrupt pivots

- Minimal content volatility

A historical footprint shows AI that expertise is earned, not manufactured. New brands can still build authority, but they must demonstrate consistency faster and more clearly.

This is also where platforms like Google AI Overviews come in: they surface trusted entities repeatedly, reinforcing authority loops inside AI systems.

Authority boosting checklist

To align with LLM authority ranking systems, ensure the following foundations are in place:

- Define and reinforce a single, clear entity identity

- Publish deeply within a focused expertise area

- Maintain factual consistency across all content

- Build contextual mentions, not random citations

- Update content without contradicting core positions

- Strengthen historical continuity over time

Authority is not optimized; it is demonstrated.

FAQs

What builds AI authority?

AI authority is built through consistent expertise signals: clear entity definition, repeated contextual mentions, factual accuracy, and a stable historical footprint across trusted sources.

How do LLMs evaluate trust?

LLMs evaluate trust by comparing consistency, accuracy, and consensus across multiple independent data sources over time.

Can new brands rank for authority in AI?

Yes, but new brands must demonstrate focused expertise quickly and avoid contradictory or scattered content signals.

Is AI authority the same as SEO authority?

No. SEO authority relies heavily on links and rankings, while AI authority prioritizes entity clarity, semantic alignment and long-term trust signals.